Key Takeaways — Open-Source Tools for Builders

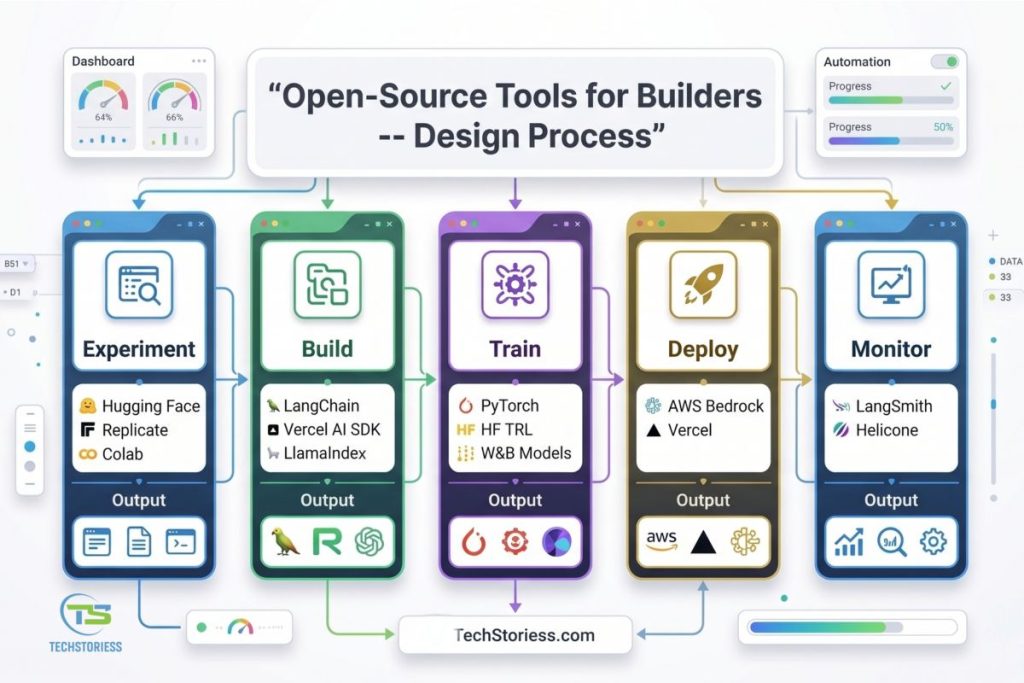

- Open-Source Tools for Builders can generally be divided into five stages: Experiment, Build, Train, Deploy, and Monitor. Choosing the right tool at each stage reduces waste, redundancy, and scalability problems.

- Hugging Face Transformers (158K+ GitHub stars) and LangChain (130K+ stars) are among the most popular open-source projects for hosting, fine-tuning, and orchestrating models into LLM applications.

- The Vercel AI SDK (22K+ stars, 20M+ monthly downloads) is one of the fastest-growing toolkits for building AI-powered web applications, particularly in the TypeScript ecosystem.

- Managed platforms such as AWS Bedrock and Weights & Biases reduce infrastructure and engineering overhead. However, they can lead to vendor lock-in, which SMBs should weigh before scaling.

- Replicate (now part of Cloudflare) is a pay-per-second GPU inference service, which makes it well suited to rapid prototyping. However, cold-start latency can hurt production performance.

🚀 Ready to build? Most of these tools offer generous free tiers. Use Hugging Face, LangChain, or the Vercel AI SDK to get your first AI-powered feature running quickly, then expand from there.

- Key Takeaways — Open-Source Tools for Builders

- 1. Introduction

- 2. What Are Open-Source Tools for Builders and Why Do They Matter?

- Why Tool Choice Matters More Than Ever

- 3. Key Features to Look For

- 4. Developer Workflow Map

- 5. Open-Source Tools for Builders – Detailed Breakdown

- 6. Comparison Matrix

- 7. Open-Source vs. Managed – Strengths and Limitations

- 8. Best Use Cases – Stack Configurations

- 9. Expert Recommendations

- 10. Future Trends (2026–2027)

- 11. Frequently Asked Questions

- 12. Conclusion

1. Introduction

Open-Source Tools for Builders have changed dramatically in a short time. With the right frameworks and infrastructure layers working together, work that once required a PhD and weeks of machine-learning infrastructure can now be done in a weekend.

But this is where the problem emerges: which tools deserve a place in your workflow?

This guide is aimed at developers, data scientists, technical founders, and DevOps teams evaluating AI tools and machine learning SDKs in 2026. Rather than listing tools uncritically, it assesses their usefulness in real-world applications against five criteria:

- Documentation quality

- Pricing transparency

- Community strength

- Integration flexibility

- Production readiness

Each tool is then mapped to the five stages of the AI workflow: Experiment → Build → Train → Deploy → Monitor.

This structure helps you choose the right tool at the right moment, so you can build faster, scale more intelligently, and avoid expensive mistakes.

2. What Are Open-Source Tools for Builders and Why Do They Matter?

Open-Source Tools for Builders are the platforms, frameworks, and SDKs that support the development, training, and deployment of machine learning models and AI applications. They span model training, experimentation, production deployment, and monitoring.

PyTorch, an open-source deep learning framework, is one example. Simply put, these tools are the foundation of current AI systems, and they significantly shorten the path from idea to production in modern teams.

Why Tool Choice Matters More Than Ever

Three key shifts have made tool selection a critical decision:

1. Radical Reduction in the Cost of Inference

Inference is now almost 1,000x cheaper than at the end of 2022, which puts production-scale AI and GPU infrastructure within reach of SMBs. Scaling was once prohibitively expensive.

2. Rise of Agentic AI Workflows

AI systems are no longer limited to single-step predictions. Agentic AI adds multi-step reasoning, tool use, and autonomous decision-making, which together call for a strong orchestration layer.

3. Rising Regulatory and Compliance Pressure

Companies now face requirements for audit trails, reproducibility, and traceable experiments. Manual workflows do not scale easily in regulated or enterprise contexts.

3. Key Features to Look For

When assessing Open-Source Tools for Builders, focus on the factors that directly affect scalability, flexibility, and production readiness:

- Model Provider Flexibility: The ability to switch providers (e.g. OpenAI, Anthropic, or open-weight models) without changing the underlying application code. This avoids vendor lock-in and keeps costs flexible.

- Streaming and Real-Time Support: Support for server-sent events (SSE), WebSockets, and edge runtimes keeps response times low, which matters for chatbots, copilots, and other real-time applications.

- Experiment Tracking: Tools should let you record hyperparameters, compare runs visually, and reproduce experiments reliably. This is essential for improving model performance and developer experience (DevEx).

- Agent Orchestration: Built-in support for multi-step agent workflows, tool calling, and state management, so agents do not have to be wired together by hand.

- Deployment Abstraction: A short path from notebook to production API, with auto-scaling and scale-to-zero on model-serving infrastructure, which removes much of the DevOps burden.

- Observability: Look for trace logging, latency monitoring, and token cost tracking. These support debugging, performance tuning, and cost optimisation.

- Documentation Quality: Clear documentation, real-world examples, and active community support shorten onboarding and reduce friction during development.
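To make model provider flexibility concrete, here is a minimal, library-free Python sketch of the pattern: application code depends only on a small interface, and concrete providers can be swapped or chained as fallbacks. `EchoProvider` and `FlakyProvider` are hypothetical stubs, not any SDK's API; in practice each provider would wrap its vendor's client library.

```python
from typing import Protocol, Sequence


class ChatProvider(Protocol):
    """Anything with a name and a complete() method can act as a provider."""

    name: str

    def complete(self, prompt: str) -> str: ...


class EchoProvider:
    """Hypothetical stand-in for a real vendor client."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class FlakyProvider:
    """Hypothetical provider that always fails, to exercise the fallback path."""

    name = "flaky"

    def complete(self, prompt: str) -> str:
        raise ConnectionError("provider unavailable")


def complete_with_fallback(providers: Sequence[ChatProvider], prompt: str) -> str:
    """Try each provider in order; the calling code never names a vendor."""
    errors = []
    for provider in providers:
        try:
            return provider.complete(prompt)
        except ConnectionError as exc:
            errors.append(f"{provider.name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


print(complete_with_fallback([FlakyProvider(), EchoProvider("open-weight")], "Hello"))
```

Because the application only calls `complete_with_fallback`, switching or reordering providers means editing a list, not the business logic.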

4. Developer Workflow Map

Every AI project moves through five stages. Understanding where each tool fits prevents overlap and gaps in your machine learning pipeline.

| Stage | Activity | Key Tools | Output |
|---|---|---|---|
| Experiment | Model selection, data exploration, prototyping | Hugging Face, Replicate, Colab | Validated hypothesis |
| Build | App architecture, prompts, RAG pipelines | LangChain, Vercel AI SDK, LlamaIndex | Working prototype |
| Train | Fine-tuning, RLHF, custom training | PyTorch, HF TRL, W&B Models | Production model |
| Deploy | API serving, edge deploy, auto-scaling | Replicate, AWS Bedrock, Vercel | Live endpoint |
| Monitor | Tracking, cost monitoring, and drift detection | W&B, LangSmith, Helicone | Optimization insights |

5. Open-Source Tools for Builders – Detailed Breakdown

5.1 Hugging Face

Often described as the hub of open-source AI, Hugging Face has become a standard foundation for modern ML workflows. It hosts more than 1 million models and 500,000+ datasets, maintains the widely used Transformers library (158K+ GitHub stars), supports over 12 million users a day, and serves 15 million+ model downloads.

Key Features:

- Model Hub: Browse, test, and deploy models with a single click.

- Transformers Library: Broad support for NLP, vision, and audio tasks.

- AutoTrain: No-code and low-code fine-tuning.

- Spaces: Quickly build and share interactive ML demos

- Inference Endpoints: Scalable APIs for production deployment

- TRL (Transformer Reinforcement Learning): Tools for RLHF and alignment workflows

Pricing:

- Free Tier: Community access with usage limits

- Pro Plan: Starts at $9/month

- Inference Endpoints: From around $0.06/hour (CPU-based)

- Enterprise: Custom quotes based on volume and usage

Strengths:

- Model experimentation and rapid prototyping

- Fine-tuning workflows and research

- Building and sharing ML demos

Limitations:

- Free tier includes rate limits and shared infrastructure constraints

- Enterprise costs can scale quickly with heavy usage

- Less prescriptive guidance for application architecture (requires developer decisions)

Best For: ML engineers, AI researchers, and teams working with open-source models.

Quick Start – Hugging Face Inference (Python):

```python
from transformers import pipeline

# With no model specified, the pipeline downloads a default sentiment model on first use.
classifier = pipeline("sentiment-analysis")

result = classifier("techstoriess reviews are incredibly useful!")

print(result)  # e.g. [{'label': 'POSITIVE', 'score': 0.9998}]
```

Source: huggingface.co/docs/transformers

5.2 LangChain

LangChain has become the de facto standard for developing LLM-driven applications, with over 130,000 GitHub stars and a growing user base. It provides modular abstractions for prompts, model calls, tools, and agent workflows, and is used to build more complex AI systems.

Key Features:

- Deep Integrations: 100+ integrations with tools, APIs, and data sources.

- Built-in RAG Support: Connectors to vector databases such as Pinecone, Chroma, and Weaviate.

Pricing:

- Core Framework: Open-source (MIT License)

- LangSmith: Free tier for development; paid plans for Teams and Enterprise usage

Strengths:

- Largest and most active community for LLM orchestration

- Frequent updates and weekly releases

- Strong ecosystem with deep integrations across the AI stack

Limitations:

- Abstraction layers can make debugging more complex

- Slight performance overhead compared to direct API integrations

Best For:

- Retrieval-Augmented Generation (RAG) applications.

- Chatbots and conversational AI.

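Quick Start – LangChain Prompt Chain (Python):

```python
from langchain_openai import ChatOpenAI
from langchain_core.prompts import ChatPromptTemplate

# Requires the langchain-openai package and an OPENAI_API_KEY environment variable.
llm = ChatOpenAI(model="gpt-4o")
prompt = ChatPromptTemplate.from_template("Summarize: {text}")

# The | operator composes the prompt and the model into one runnable chain.
chain = prompt | llm

response = chain.invoke({"text": "LangChain composes prompts, models, and tools."})
print(response.content)
```

Source: python.langchain.com/docs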
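The `prompt | llm` line works through operator overloading: each component overrides `|` so that composing two steps returns a new step that feeds one output into the next. The library-free sketch below shows the idea with a hypothetical `Step` class and a fake model that upper-cases text instead of calling an LLM; it illustrates the pattern only and is not LangChain's actual Runnable implementation.

```python
from typing import Any, Callable


class Step:
    """Hypothetical minimal 'runnable': wraps a function and supports | chaining."""

    def __init__(self, fn: Callable[[Any], Any]) -> None:
        self.fn = fn

    def invoke(self, value: Any) -> Any:
        return self.fn(value)

    def __or__(self, other: "Step") -> "Step":
        # a | b returns a new Step that passes a's output into b.
        return Step(lambda value: other.invoke(self.invoke(value)))


# Prompt step: fill a template from a dict of variables.
prompt = Step(lambda variables: "Summarize: {text}".format(**variables))

# Fake model step: upper-cases the prompt instead of calling an LLM.
fake_llm = Step(lambda text: text.upper())

chain = prompt | fake_llm
print(chain.invoke({"text": "pipes compose steps"}))
```

Because every composition is itself a `Step`, chains can be extended with further `|` stages without changing the earlier ones.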

5.3 Weights & Biases (W&B)

Weights & Biases (W&B) is one of the most popular platforms for tracking and monitoring ML experiments. It is used by over 300 organisations and 30+ foundation model builders, and offers a single platform for tracking experiments, visualising performance, and managing models throughout the ML lifecycle.

Its ecosystem includes:

- Weave: For LLM evaluation, tracing, and debugging

- Models: For training workflows and model registry management

- Inference (via CoreWeave): Scalable hosted inference infrastructure

Key Features:

- Real-Time Experiment Dashboards: Visualise metrics, logs, and model performance as runs progress.

- Hyperparameter Sweeps: Automate large-scale hyperparameter search and optimisation.

- Model Registry: Version, manage, and deploy models reproducibly.

- Weave for LLM Observability: Trace prompts, responses, and system behaviour.

- Hosted Inference: Deploy and scale models with managed infrastructure.
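Conceptually, a hyperparameter sweep runs the same training function over many configurations and keeps the best result. The sketch below is a plain grid search in standard-library Python; the toy `train` objective and the search space are hypothetical stand-ins, and this is not the W&B Sweeps API (which also offers random and Bayesian search and can distribute runs across agents).

```python
import itertools
from typing import Dict, List, Tuple

# Hypothetical search space: every combination of these values is tried.
SEARCH_SPACE: Dict[str, List[float]] = {
    "learning_rate": [1e-4, 1e-3, 1e-2],
    "dropout": [0.0, 0.1, 0.3],
}


def train(learning_rate: float, dropout: float) -> float:
    """Toy stand-in for a training run that returns a validation loss.

    This made-up loss is minimised at learning_rate=1e-3 and dropout=0.1.
    """
    return abs(learning_rate - 1e-3) * 100 + abs(dropout - 0.1)


def grid_sweep(space: Dict[str, List[float]]) -> Tuple[Dict[str, float], float]:
    """Evaluate every configuration in the grid and return the best one."""
    names = list(space)
    best_config: Dict[str, float] = {}
    best_loss = float("inf")
    for values in itertools.product(*(space[n] for n in names)):
        config = dict(zip(names, values))
        loss = train(**config)  # a tracker would log config and loss here
        if loss < best_loss:
            best_config, best_loss = config, loss
    return best_config, best_loss


best, loss = grid_sweep(SEARCH_SPACE)
print(best, loss)
```

Grid search grows multiplicatively with each added hyperparameter, which is why sweep tools also offer random and Bayesian strategies.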

Pricing:

- Free Tier: Personal use with up to 5GB storage

- Teams Plan: Begins at $50/user/month (includes 100GB storage)

- Enterprise: Custom pricing; includes SSO, compliance support (including HIPAA), and advanced security.

Strengths:

- Best-in-class visualisation of experiments and model performance

- Tight integrations with PyTorch, Lightning, and Hugging Face

- Generous free tier for researchers and academic use

Limitations:

- Per-seat pricing can become expensive as teams scale

- Lacks native pipeline orchestration (often requires external tools)

- Occasional latency in cloud-based dashboards

Best For:

- Experiment tracking and performance monitoring

- Model training workflows and optimisation

- LLM evaluation and observability

Ideal users: ML teams, research groups, and organisations scaling AI systems in production.

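Quick Start – W&B Experiment Tracking (Python):

```python
import wandb

# Start a run; requires the wandb package and a logged-in account (`wandb login`).
wandb.init(project="my-ai-project")

# Log metrics for the current step; W&B charts them in the project dashboard.
wandb.log({"loss": 0.34, "accuracy": 0.91, "epoch": 5})

# Mark the run as finished and flush any pending data.
wandb.finish()
```

5.4 Vercel AI SDK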

The Vercel AI SDK is a free, open-source TypeScript library for building AI-driven web applications. It has attracted 22,800+ GitHub stars and more than 20 million monthly npm downloads, and is quickly gaining popularity with frontend and full-stack developers.

Version 6 (2026) brought significant enhancements, including native agent support, full MCP (Model Context Protocol) support, reranking, and DevTools. It is used by companies such as Thomson Reuters and Clay, as well as teams at Fortune 500 companies.

Key Features:

- Provider-Agnostic API: Switch model providers without altering core application logic.

- ToolLoopAgent: Built-in support for agent workflows and tool execution

- AI Gateway: Handles failover, retries, and multi-provider routing

- Frontend Framework Hooks: Native support for React, Svelte, and Vue

- DevTools: Debug, inspect, and optimise LLM interactions in real time.
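Agent features such as ToolLoopAgent are built around a loop: the model either requests a tool call or returns a final answer, tool results are fed back into the conversation, and the loop ends at an answer or a step limit. The Python sketch below shows that control flow with a scripted fake model and a hypothetical `get_weather` tool; it illustrates the pattern only and is not the Vercel AI SDK's (TypeScript) API.

```python
from typing import Callable, Dict, List, Union

# Hypothetical tool registry: tool name -> function taking one string argument.
TOOLS: Dict[str, Callable[[str], str]] = {
    "get_weather": lambda city: f"Sunny in {city}",
}

# A model turn is either a tool request or a final answer string.
ToolCall = Dict[str, str]  # {"tool": ..., "arg": ...}
Turn = Union[ToolCall, str]


def fake_model(history: List[str]) -> Turn:
    """Scripted stand-in for an LLM: call a tool first, then answer."""
    if not any(entry.startswith("tool:") for entry in history):
        return {"tool": "get_weather", "arg": "Paris"}
    return "Final answer based on " + history[-1]


def run_agent(question: str, max_steps: int = 5) -> str:
    history = [f"user: {question}"]
    for _ in range(max_steps):
        turn = fake_model(history)
        if isinstance(turn, str):  # the model produced a final answer
            return turn
        result = TOOLS[turn["tool"]](turn["arg"])  # execute the requested tool
        history.append(f"tool: {result}")  # feed the result back to the model
    return "Stopped: step limit reached"


print(run_agent("What's the weather in Paris?"))
```

The step limit is the key safety valve: without it, a model that keeps requesting tools would loop indefinitely.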

Pricing:

- Completely Free: Open-source under the Apache 2.0 license

- Cost Model: You pay only for the external model provider APIs you use (e.g., OpenAI, Anthropic)

Strengths:

- Minimal abstraction overhead—feels natural for TypeScript developers

- First-class integration with Next.js and modern frontend stacks

- Designed to be provider-agnostic from the ground up

Limitations:

- Limited to TypeScript/JavaScript ecosystems

- Not suitable for model training or deep ML workflows

- Smaller ecosystem compared to mature Python-based frameworks like LangChain

Best For:

- AI-powered web applications and SaaS products

- Streaming chat interfaces and copilots

- Full-stack agent-based applications

Ideal users: TypeScript developers, frontend engineers, and full-stack teams working with modern web frameworks.

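Quick Start – Vercel AI SDK (TypeScript):

```typescript
import { generateText } from 'ai';

// A plain "provider/model" string is resolved through the Vercel AI Gateway,
// which needs gateway credentials configured. Top-level await assumes an ES module.
const { text } = await generateText({
  model: 'anthropic/claude-sonnet-4-20250514',
  prompt: 'Explain RAG in one paragraph.',
});

console.log(text);
```

Source: sdk.vercel.ai/docs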

5.5 AWS Bedrock

AWS Bedrock is Amazon's managed service for accessing and deploying foundation models, including offerings from Anthropic (Claude), Meta (Llama), Cohere, and Amazon Nova. It integrates closely with core AWS services, including S3, Lambda, SageMaker, and IAM, which makes it a strong choice for enterprise environments.

Key Features:

- Multi-Provider Model API: Access multiple foundation models through a unified interface

- Knowledge Bases: Built-in managed RAG pipelines with data ingestion and retrieval

- Guardrails: Enforce safety, compliance, and output filtering

- Agents for Automation: Build task-oriented workflows with minimal orchestration overhead

- Native AWS Integration: Deep compatibility with AWS security, storage, and compute services

Pricing:

- Usage-Based: Pay-per-token pricing model

- Example: Claude Sonnet ~ $3 per million input tokens and ~$15 per million output tokens

- Provisioned Throughput: Available for predictable workloads and latency control
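Per-token pricing is straightforward to estimate once you know your token volumes. The sketch below applies the example Claude Sonnet rates listed above (about $3 per million input tokens and $15 per million output tokens) as defaults; these rates are approximate and change over time, so check current AWS pricing before budgeting.

```python
def token_cost_usd(
    input_tokens: int,
    output_tokens: int,
    input_per_million: float = 3.0,  # approx. Claude Sonnet input rate (USD)
    output_per_million: float = 15.0,  # approx. Claude Sonnet output rate (USD)
) -> float:
    """Estimate on-demand cost for a volume of tokens under per-token pricing."""
    input_cost = input_tokens / 1_000_000 * input_per_million
    output_cost = output_tokens / 1_000_000 * output_per_million
    return input_cost + output_cost


# Example: 2M input tokens and 500K output tokens in a month.
monthly = token_cost_usd(2_000_000, 500_000)
print(f"${monthly:.2f}")  # $13.50
```

At these rates an output token costs five times as much as an input token, so verbose responses can dominate the bill.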

Strengths:

- Enterprise-grade compliance (SOC 2, HIPAA, ISO 27001)

- Seamless integration within AWS ecosystems

- Built-in safety and governance controls

Limitations:

- Strong vendor lock-in within AWS infrastructure

- Slower innovation compared to open-source ecosystems

- Pricing can be complex and less transparent at scale

Best For:

- Enterprise AI applications and regulated industries

- Organisations already operating within AWS

5.6 Replicate

Replicate is a cloud-based AI inference platform (acquired by Cloudflare in 2025) that simplifies running models via one-line API calls. It hosts 50,000+ community models and a curated set of production-ready models, making it highly accessible for developers.

Key Features:

- One-Line Inference API: Run models instantly without infrastructure setup

- Cog Packaging: Containerise and deploy custom models easily

- Pay-Per-Second GPU Billing: Only pay for actual compute usage

- Fine-Tuning Support: Customise models for specific tasks

- Auto Scale-to-Zero: Eliminates idle infrastructure costs

Pricing:

- CPU: ~$0.10/hour

- T4 GPU: ~$0.55/hour

- A40 GPU: ~$1.10/hour

- A100 GPU: ~$2.30/hour

- Free trial credits available
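Pay-per-second billing means cost scales with the seconds a job actually runs. The sketch below converts the approximate hourly rates listed above into a per-run cost; the 45-second job is hypothetical, and real bills may differ depending on how billable time is counted for a given model, so treat the result as a rough estimate.

```python
# Approximate hourly rates from the pricing list above (USD per hour).
HOURLY_RATES = {
    "cpu": 0.10,
    "t4": 0.55,
    "a40": 1.10,
    "a100": 2.30,
}


def run_cost_usd(hardware: str, seconds: float) -> float:
    """Cost of one run billed per second: hourly rate / 3600 * seconds."""
    return HOURLY_RATES[hardware] / 3600 * seconds


# Hypothetical job: 45 seconds on an A100, repeated 1,000 times.
per_run = run_cost_usd("a100", 45)
print(f"per run: ${per_run:.4f}, 1,000 runs: ${per_run * 1000:.2f}")
```

Because idle time is not billed, short and bursty workloads stay cheap; a model kept busy around the clock is where dedicated or self-hosted inference may become more economical.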

Strengths:

- Extremely low barrier to entry for model deployment

- Large marketplace of ready-to-use models

- Cost-efficient for short workloads due to scale-to-zero

Limitations:

- Cold start latency (10–180 seconds)

- Costs can become unpredictable at scale

- Cloud-only (no self-hosting options)

Best For:

- Rapid prototyping and experimentation

- Image, video, and generative AI use cases

Ideal users: Indie developers, startups, and early-stage product teams.

5.7 PyTorch

PyTorch, developed by Meta AI Research, is the dominant deep learning framework powering a majority of modern AI research and production systems. It serves as the foundation for many tools in this ecosystem.

Key Features:

- Dynamic Computation Graphs: Define-by-run graphs for flexible, intuitive model building

- torch.compile: Performance optimisation introduced in PyTorch 2.x

- Distributed Training: Multi-GPU and large-scale model training support

- TorchServe: Production-ready model serving

- Ecosystem Libraries: TorchVision, TorchAudio, TorchText
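A dynamic (define-by-run) computation graph is recorded as ordinary code executes and then walked backwards to compute gradients. The toy scalar autograd below illustrates that idea in plain Python with a hypothetical `Value` class; it is a teaching sketch, not how PyTorch is implemented. In real PyTorch you would create tensors with `torch.tensor(2.0, requires_grad=True)` and call `.backward()`.

```python
from typing import Callable, List, Set, Tuple


class Value:
    """A scalar that records how it was computed, so gradients can flow back."""

    def __init__(self, data: float, parents: Tuple["Value", ...] = ()) -> None:
        self.data = data
        self.grad = 0.0
        self._parents = parents
        self._backward: Callable[[], None] = lambda: None

    def __add__(self, other: "Value") -> "Value":
        out = Value(self.data + other.data, (self, other))

        def backward() -> None:
            self.grad += out.grad
            other.grad += out.grad

        out._backward = backward
        return out

    def __mul__(self, other: "Value") -> "Value":
        out = Value(self.data * other.data, (self, other))

        def backward() -> None:
            self.grad += other.data * out.grad
            other.grad += self.data * out.grad

        out._backward = backward
        return out

    def backward(self) -> None:
        # Topologically order the graph recorded during the forward pass.
        order: List[Value] = []
        seen: Set[int] = set()

        def visit(node: "Value") -> None:
            if id(node) not in seen:
                seen.add(id(node))
                for parent in node._parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):  # apply the chain rule from output to inputs
            node._backward()


x, y = Value(2.0), Value(3.0)
z = x * y + x  # the graph is built as this line runs
z.backward()
print(z.data, x.grad, y.grad)  # 8.0 4.0 2.0  (dz/dx = y + 1, dz/dy = x)
```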

Pricing:

- Free and open-source (BSD License)

- Infrastructure costs depend on the compute environment (cloud/on-premise)

Strengths:

- Industry-standard framework with massive adoption

- Highly flexible for research and custom architectures

- Backed by one of the largest AI research communities

Limitations:

- Requires strong ML and deep learning expertise

- No built-in experiment tracking or MLOps pipeline

- Steep learning curve for beginners

Best For:

- Custom model development and research

- Deep learning experimentation and training

Ideal users: ML researchers, AI engineers, and deep learning specialists.

5.8 LlamaIndex

LlamaIndex is a data framework that connects LLMs to external data sources, making it a critical layer for building Retrieval-Augmented Generation (RAG) applications.

Key Features:

- 160+ Data Connectors: Ingest data from APIs, databases, and documents

- Flexible Indexing: Supports vector, keyword, and graph-based indexing

- Query Engines: Generate responses with citations and context

- LlamaParse: Advanced document parsing for structured extraction

- LlamaCloud: Managed RAG infrastructure for production use
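At its core, the retrieval step of a RAG pipeline ranks stored chunks by similarity to the query and passes the best matches to the LLM as context. The sketch below uses bag-of-words cosine similarity from the standard library as a stand-in for learned embeddings and a vector index; the sample chunks are hypothetical, and this illustrates the idea only, not LlamaIndex's API.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def vectorize(text: str) -> Counter:
    """Bag-of-words term counts (a crude stand-in for an embedding)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: List[str], k: int = 2) -> List[Tuple[float, str]]:
    """Return the k chunks most similar to the query, best first."""
    q = vectorize(query)
    scored = [(cosine(q, vectorize(c)), c) for c in chunks]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:k]


chunks = [
    "Refunds are processed within 5 business days.",
    "Our office is closed on public holidays.",
    "To request a refund, contact support with your order number.",
]
for score, chunk in retrieve("How do I get a refund?", chunks):
    print(f"{score:.2f}  {chunk}")
```

Note that "refunds" does not match "refund" here, so the first chunk scores zero; learned embeddings capture that kind of similarity, which is one reason production RAG systems use them.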

Pricing:

- Open-source (MIT License)

- LlamaCloud: Usage-based pricing

- LlamaParse: Free tier available

Strengths:

- Best-in-class data layer for RAG applications

- Opinionated design simplifies complex workflows

- Strong document parsing capabilities

Limitations:

- Narrower scope compared to full orchestration tools like LangChain

- Smaller community ecosystem

- Cloud pricing may vary with usage

Best For:

- RAG pipelines and document-based AI systems

- Knowledge retrieval and Q&A systems

Ideal users: Data engineers, AI engineers, and knowledge system builders.


5.9 MLflow

MLflow, developed by Databricks, is an open-source platform for managing the ML lifecycle, including experiment tracking, model packaging, and deployment across environments.

Key Features:

- Experiment Tracking: Log metrics, parameters, and artefacts

- MLmodel Format: Standardised packaging for reproducibility

- Model Registry: Manage lifecycle stages (staging, production)

- Multi-Platform Deployment: Deploy across cloud, on-prem, or hybrid setups
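A model registry keeps numbered versions of each model and records which version holds each lifecycle stage. The minimal in-memory sketch below shows that bookkeeping with a hypothetical `ModelRegistry` class and made-up artifact paths; it is not the MLflow API, which persists this state in a tracking server (and recent MLflow releases recommend version aliases in place of fixed stages).

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ModelVersion:
    version: int
    artifact_uri: str
    stage: str = "None"


@dataclass
class ModelRegistry:
    """Hypothetical in-memory registry: model name -> list of versions."""

    models: Dict[str, List[ModelVersion]] = field(default_factory=dict)

    def register(self, name: str, artifact_uri: str) -> ModelVersion:
        versions = self.models.setdefault(name, [])
        mv = ModelVersion(version=len(versions) + 1, artifact_uri=artifact_uri)
        versions.append(mv)
        return mv

    def transition(self, name: str, version: int, stage: str) -> None:
        """Move a version to a stage, archiving whichever version held it before."""
        for mv in self.models[name]:
            if mv.stage == stage and mv.version != version:
                mv.stage = "Archived"
        self.models[name][version - 1].stage = stage

    def current(self, name: str, stage: str) -> ModelVersion:
        return next(mv for mv in self.models[name] if mv.stage == stage)


registry = ModelRegistry()
registry.register("churn-model", "runs/abc/model")
registry.register("churn-model", "runs/def/model")
registry.transition("churn-model", 1, "Production")
registry.transition("churn-model", 2, "Production")  # version 1 is archived
print(registry.current("churn-model", "Production").version)
```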

Pricing:

- Free and open-source (Apache 2.0)

- Managed MLflow available via Databricks

- Self-hosting available at no cost (infra required)

Strengths:

- Vendor-neutral and multi-cloud friendly

- Strong governance and model lifecycle management

- Easy to self-host and customise

Limitations:

- UI is less modern compared to W&B

- LLM-specific features are still evolving

- Self-hosting requires maintenance and DevOps effort

Best For:

- End-to-end ML lifecycle management

- Multi-cloud and governance-focused setups

Ideal users: Platform teams, MLOps engineers, and enterprise data teams.

5.10 Gradio (by Hugging Face)

Gradio is a lightweight Python library for building interactive ML demos with minimal code. It is the default interface tool for Hugging Face Spaces and is widely used for showcasing models.

Key Features:

- Rapid UI Creation: Build demos in under 10 lines of code

- Multimodal Support: Text, image, audio, and video inputs/outputs

- One-Click Sharing: Instantly share demos via public links

- Spaces Integration: Deploy seamlessly on Hugging Face

- Auto API Generation: Convert demos into usable APIs

Pricing:

- Free and open-source (Apache 2.0)

- GPU-powered Spaces start at ~$0.60/hour

Strengths:

- Fastest way to turn models into interactive demos

- No frontend development required

- Ideal for testing and showcasing ideas

Limitations:

- Not suitable for production-grade applications

- Limited UI customization options

- Performance constraints under heavy usage

Best For:

- Model demos and prototypes

- Internal tools and quick validation

Ideal users: Researchers, data scientists, and ML practitioners.

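Quick Start – Gradio Demo (Python):

```python
import gradio as gr


def greet(name: str) -> str:
    # Gradio passes the text-box value in as `name` and displays the returned string.
    return f"Hello {name}, welcome to TechStoriess!"


# Build a text-in, text-out interface and launch a local web server for it.
gr.Interface(fn=greet, inputs="text", outputs="text").launch()
```

6. Comparison Matrix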

| Tool | Stage | Pricing | Open Source | GitHub Stars | Best For |
|---|---|---|---|---|---|
| Hugging Face | Experiment | Freemium | Yes | 158K+ (Transformers) | Models |
| LangChain | Build | Open Source | Yes | 130K+ | LLM Apps |
| W&B | Monitor | Free tier; Teams from $50/user/mo | No | N/A | Tracking |
| Vercel AI SDK | Build | Free | Yes | 22.8K+ | Web AI |
| AWS Bedrock | Deploy | Pay/token | No | N/A | Enterprise |
| Replicate | Deploy | Pay/sec | No | N/A | Prototyping |
| PyTorch | Train | Free | Yes | 88K+ | Training |
| LlamaIndex | Build | Open Source | Yes | 40K+ | RAG |
| MLflow | Monitor | Free | Yes | 19K+ | MLOps |
| Gradio | Experiment | Free | Yes | 36K+ | Demos |

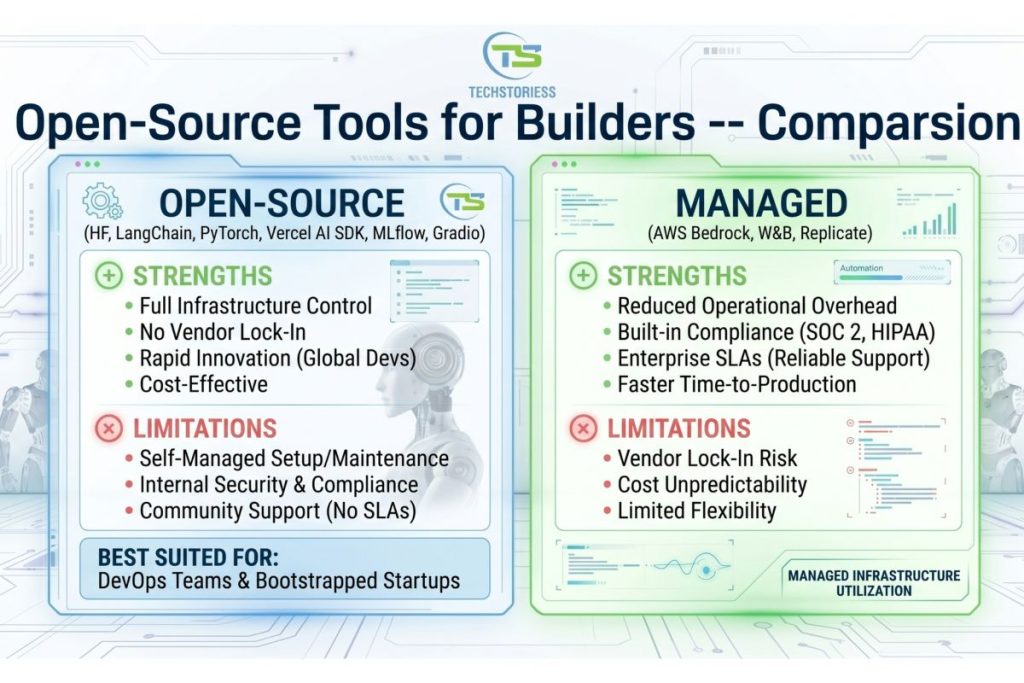

7. Open-Source vs. Managed – Strengths and Limitations

Open-Source (HF, LangChain, PyTorch, Vercel AI SDK, MLflow, Gradio)

Strengths:

- Full Infrastructure Control: Customise every layer of your stack

- No Vendor Lock-In: Switch providers or architectures freely

- Rapid Innovation: Driven by active global developer communities

- Cost-Effective: Most tools are free or low-cost to start

Best suited for: Teams with DevOps expertise and startups operating on tight budgets.

Limitations:

- Self-Managed Infrastructure: Requires setup, scaling, and maintenance

- Security & Compliance Responsibility: Must handle governance internally

- No Guaranteed Support: Community-driven help without SLAs

Managed (AWS Bedrock, W&B, Replicate)

Strengths:

- Reduced Operational Overhead: No need to manage infrastructure

- Built-in Compliance: Supports standards like SOC 2, HIPAA

- Enterprise SLAs: Reliable uptime and support

- Faster Time-to-Production: Pre-built systems accelerate deployment

Limitations:

- Vendor Lock-In Risk: Harder to switch platforms later

- Cost Unpredictability: Per-seat or per-token pricing can scale quickly

- Limited Flexibility: Less control over architecture and customisation

8. Best Use Cases – Stack Configurations

NLP Pipeline (Chatbot / Knowledge Assistant)

Recommended Stack:

- LangChain

- Hugging Face (Embeddings)

- Vector DB: Pinecone / Chroma

- Weights & Biases

Estimated Cost: $200–$800/month

Why this works:

Combines strong orchestration (LangChain) with flexible embeddings and reliable experiment tracking for iterative improvement.

Computer Vision Application

Recommended Stack:

- PyTorch

- Hugging Face Transformers (ViT, DETR)

- Weights & Biases

- Replicate

Estimated Cost: $500–$2,000/month

Why this works:

PyTorch handles training, Hugging Face accelerates model access, and Replicate simplifies inference without heavy infrastructure setup.

LLM-Powered Web Application

Recommended Stack:

- Vercel AI SDK

- Next.js

- Claude / OpenAI via AI Gateway

- LangSmith

Estimated Cost: $100–$1,500/month

Why this works:

Optimised for frontend + backend integration, enabling fast deployment of real-time AI features like chat and copilots.

Enterprise AI (Regulated Industry)

Recommended Stack:

- AWS Bedrock

- LlamaIndex

- MLflow

- Weights & Biases

Estimated Cost: $2,000–$15,000+/month

Why this works: Combines compliance (AWS), data orchestration (LlamaIndex), governance (MLflow), and tracking (W&B) for enterprise-grade AI systems.

9. Expert Recommendations

Solo Founders

Start with Vercel AI SDK and Hugging Face free tier. These offer the fastest way to prototype and validate ideas. Use Gradio to quickly build and share demos.

Startup Teams (5–20)

Use LangChain or LlamaIndex for orchestration, Weights & Biases for tracking, and Replicate for rapid testing. Once product-market fit is validated, consider moving to self-hosted inference to optimise costs.

Enterprise Teams

Adopt AWS Bedrock for compliance, MLflow for governance, and Weights & Biases for training oversight. Plan for dedicated ML platform engineering resources early.

Research Teams

PyTorch remains essential for experimentation. Combine it with Hugging Face Transformers to speed up iteration.

Leverage W&B’s free academic tier for enterprise-grade tracking at zero cost.

10. Future Trends (2026–2027)

Agentic Frameworks Become Standard

Frameworks like LangGraph and Vercel's ToolLoopAgent are making multi-step autonomous agents a core part of open-source AI tooling. Expect native agent support across most platforms by late 2026.

Continued Drop in Inference Costs

Advances in hardware (e.g., next-gen GPUs), optimisation frameworks (vLLM, SGLang), and model distillation will continue to reduce costs—making AI accessible even for micro-SaaS businesses.

FinOps for AI Becomes Critical

As usage scales, teams will rely on:

- Token-level cost tracking

- Semantic caching

- Intelligent model routing

Cost optimisation will become a core engineering function rather than an afterthought.
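Token-level cost tracking and model routing can start very simply: send easy requests to a cheaper model, compute each request's cost from its token counts, and keep a running ledger. The sketch below is illustrative only; the model names, per-million-token prices, and length-based routing rule are hypothetical placeholders, and production routers often rely on classifiers or quality evaluations rather than prompt length.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical models and prices in USD per million tokens: (input, output).
PRICES: Dict[str, tuple] = {
    "small-model": (0.25, 1.25),
    "large-model": (3.00, 15.00),
}


def route(prompt: str, max_small_words: int = 50) -> str:
    """Toy routing rule: short prompts go to the cheaper model."""
    return "small-model" if len(prompt.split()) <= max_small_words else "large-model"


@dataclass
class CostLedger:
    entries: List[dict] = field(default_factory=list)

    def record(self, model: str, input_tokens: int, output_tokens: int) -> float:
        """Compute and store the cost of one request."""
        in_price, out_price = PRICES[model]
        cost = (input_tokens * in_price + output_tokens * out_price) / 1_000_000
        self.entries.append({"model": model, "cost": cost})
        return cost

    def total_by_model(self) -> Dict[str, float]:
        totals: Dict[str, float] = {}
        for entry in self.entries:
            totals[entry["model"]] = totals.get(entry["model"], 0.0) + entry["cost"]
        return totals


ledger = CostLedger()
model = route("Classify this ticket: refund request")
ledger.record(model, input_tokens=1_000, output_tokens=200)
print(model, ledger.total_by_model())
```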

MCP (Model Context Protocol) Adoption

MCP is emerging as a standard for connecting models with tools and external systems. With early adoption already visible, widespread standardisation is expected by the end of 2026.
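Under the hood, MCP messages use JSON-RPC 2.0. The sketch below builds the request a client would send to invoke a server tool through the `tools/call` method; the tool name `search_docs` and its arguments are hypothetical, and a real client would use an MCP SDK over a transport such as stdio rather than hand-building messages.

```python
import json
from typing import Any, Dict


def tool_call_request(request_id: int, tool: str, arguments: Dict[str, Any]) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool and arguments exposed by some MCP server.
print(tool_call_request(1, "search_docs", {"query": "rate limits"}))
```

A client typically discovers the available tools first with a `tools/list` request, then calls them by name as shown.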

Open-Source Momentum Accelerates

Open-source AI continues to close the gap with proprietary systems. Open-weight models distributed through hubs such as Hugging Face are improving rapidly, and enterprise adoption continues to rise.

11. Frequently Asked Questions

What are Open-Source Tools for Builders?

Software platforms, frameworks, and SDKs that help teams build, train, deploy, and monitor ML models and AI applications – from open-source libraries like PyTorch to managed services like AWS Bedrock.

Which open-source tools are best for beginners?

Hugging Face Inference API and the Vercel AI SDK offer the lowest barriers. You can add AI features with under 10 lines of code. Gradio is excellent for building quick interactive demos.

Are open-source AI tools production-ready?

Yes. PyTorch, HF Transformers, LangChain, and Vercel AI SDK run in production at Fortune 500 companies. Readiness depends on your team’s expertise in infrastructure.

How much do Open-Source Tools for Builders cost?

Many core tools are free (PyTorch, LangChain, Vercel AI SDK, MLflow, Gradio). Paid tiers of managed services start at $50/user/month (W&B Teams). Total cost depends on API usage, GPU compute, and team size.

Can I switch AI development tools later without losing work?

Tools with open standards reduce switching risk: MLflow’s model format, HF’s Model Hub, and Vercel AI SDK’s provider-agnostic API are designed for portability. Proprietary platforms create more lock-in.

What is the difference between LangChain and LlamaIndex?

LangChain is a broad orchestration framework for LLM applications with agents, chains, and tool integrations. LlamaIndex specialises in data ingestion, indexing, and retrieval for RAG. Many teams use both: LlamaIndex for data retrieval, LangChain for application logic.

Is Hugging Face free for commercial use?

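Yes. The Transformers library is Apache-2.0-licensed, which permits commercial use. Individual models on the Hub carry their own licenses, so check each model's license before shipping it. The free Inference API has rate limits, while paid Inference Endpoints and Pro plans remove those limits for production workloads.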

How do I deploy an AI model to production without managing servers?

Replicate and AWS Bedrock both offer serverless deployment of AI models. Replicate provides pay-per-second inference with auto-scaling. Bedrock offers managed endpoints with enterprise compliance. Apps built with the Vercel AI SDK and deployed on Vercel run AI features on serverless and edge infrastructure without server management.

What is the cheapest way to run AI inference in 2026?

For prototyping, Hugging Face’s free Inference API and Replicate’s trial credits offer zero-cost entry. For production, self-hosting open-weight models on GPU cloud providers (Lambda Labs, RunPod) costs 40–60% less than managed API pricing at scale.

Can I use multiple AI development tools together?

Yes. Most production AI stacks combine 2–4 tools. A typical setup uses Hugging Face for models, LangChain for orchestration, W&B for tracking, and Vercel AI SDK or AWS Bedrock for deployment. The tools in this guide are composable, not mutually exclusive.

12. Conclusion

The Open-Source Tools for Builders landscape in 2026 rewards deliberate selection over default choices. Hugging Face and PyTorch dominate the foundation layer. LangChain and LlamaIndex lead orchestration. Vercel AI SDK is the fastest path to shipping AI web apps. W&B sets the standard for tracking. AWS Bedrock covers enterprise compliance.

No single tool covers every stage of the workflow. The best teams combine two to four tools across the pipeline, choosing open-source defaults where possible and managed services where compliance demands them.