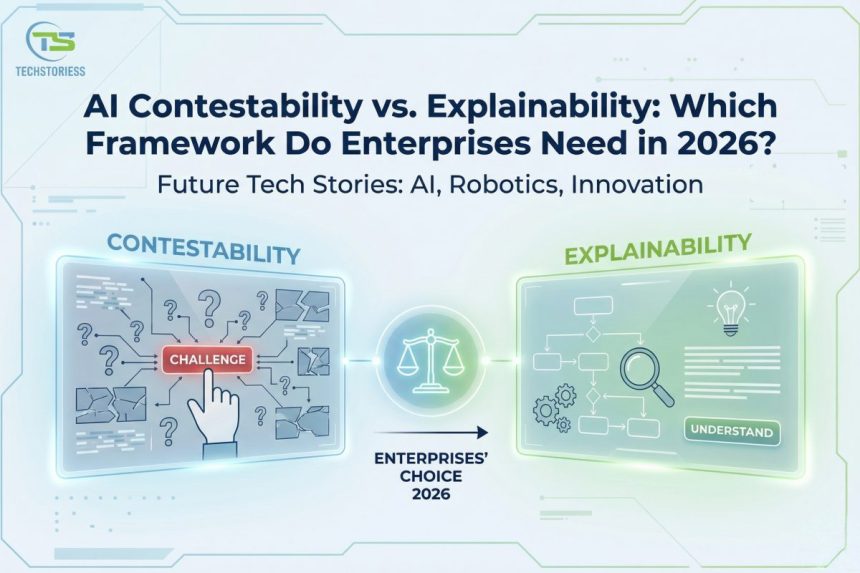

Artificial intelligence governance has entered a new phase. In 2026, enterprises no longer debate whether they need transparency—they debate whether transparency alone is enough. While explainable AI tools help organizations interpret model behavior, regulators, boards, and customers increasingly demand enforceable intervention mechanisms. This shift has elevated AI contestability from academic concept to enterprise requirement.

- The Conceptual Divide: What Explainable AI Solves (and What It Doesn’t)

- What AI Contestability Really Means in Enterprise Systems

- Regulatory Drivers in 2026: Why Explainability Alone Fails Compliance

- Operationalizing Contestable AI Systems: The Technical Architecture

- The Enterprise AI Governance Framework: Integrating Explainability and Contestability

- Real-World Lessons: Why Explainability Isn’t Enough

- Economic Risk Modeling: The Financial Case for Contestable AI

- AI Incident Response: When Automated Decisions Go Wrong at Scale

- Vendor and Third-Party Model Accountability

- Board-Level AI Risk Literacy: Governance Starts at the Top

- Cross-Functional Governance as a Structural Norm

- Actionable Implementation Framework: Turning Governance into Immediate Execution

- Immediate Strategic Insight

- Conclusion

- FAQ

Enterprises now build AI systems that influence credit approvals, hiring decisions, fraud detection, healthcare prioritization, and operational automation. When automated decisions affect rights, access, or financial outcomes, explanation alone does not satisfy accountability expectations. Organizations must enable challenge, review, and correction. The real question is no longer “Can we explain it?” but “Can we contest it?”

This article outlines the five-part framework enterprises must adopt in 2026—moving from transparency toward structurally contestable AI systems embedded within mature AI governance frameworks.

The Conceptual Divide: What Explainable AI Solves (and What It Doesn’t)

Explainable AI emphasizes interpretability by enabling organizations to understand model outputs, such as specific features that influenced a decision, probability confidence scores, or why a prediction shifted over time. It illuminates black-box models through established techniques such as feature importance rankings, SHAP values, and counterfactual explanations so that stakeholders can interpret how inputs shape outcomes.
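As a minimal illustration of feature attribution, the sketch below assumes a hypothetical linear credit-scoring model with independent features. In that special case SHAP values have a closed form: each feature's contribution is its weight multiplied by the feature's deviation from a baseline (here, assumed population means), and the contributions sum to the gap between the applicant's score and the baseline score. The feature names, weights, and baseline are invented. Production systems would typically rely on a dedicated library such as `shap` and on models that are rarely this simple.

```python
from typing import Dict

# Hypothetical linear credit-scoring model: score = BIAS + sum(w_i * x_i).
WEIGHTS: Dict[str, float] = {"income_k": 0.04, "debt_ratio": -3.0, "late_payments": -0.5}
BIAS = -1.0
# Hypothetical baseline: mean feature values over a reference population.
BASELINE: Dict[str, float] = {"income_k": 50.0, "debt_ratio": 0.3, "late_payments": 1.0}


def score(x: Dict[str, float]) -> float:
    return BIAS + sum(WEIGHTS[f] * x[f] for f in WEIGHTS)


def linear_shap(x: Dict[str, float]) -> Dict[str, float]:
    """Exact SHAP values for a linear model with independent features:
    phi_i = w_i * (x_i - E[x_i])."""
    return {f: WEIGHTS[f] * (x[f] - BASELINE[f]) for f in WEIGHTS}


applicant = {"income_k": 40.0, "debt_ratio": 0.5, "late_payments": 3.0}
phi = linear_shap(applicant)

# Efficiency property: the contributions add up to score(x) - score(baseline).
assert abs(sum(phi.values()) - (score(applicant) - score(BASELINE))) < 1e-9

# Most negative contributions first, i.e. the inputs that pushed the score down most.
for feature, contribution in sorted(phi.items(), key=lambda kv: kv[1]):
    print(f"{feature}: {contribution:+.2f}")
```

The output tells the applicant which inputs lowered the score, but nothing in it offers a way to dispute an incorrect input or the outcome itself.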

However, interpretability does not guarantee accountability. It can explain the model’s logic but cannot alter the decision outcome. For instance, an explanation can describe why a model denied a particular loan application, but it cannot create a structured pathway that challenges or reverses that decision. It stops at clarifying reasoning without remediating harm.

This distinction becomes critical when automated outputs directly affect individuals. Transparency provides visibility; intervention and correction require contestability. Without procedural pathways, explainability risks remaining informational rather than operational. To convert transparency into accountability, organizations need structured intervention mechanisms.

Explainable AI should therefore be treated as a foundational pillar of governance rather than the entire framework.

- Explainability enhances model transparency but not enforcement authority.

- Interpretability tools illuminate reasoning without guaranteeing remediation.

- To achieve meaningful accountability, transparency must be paired with intervention capability that enables review and correction.

What AI Contestability Really Means in Enterprise Systems

AI contestability is a structured capability to challenge, review, and modify automated decisions. It requires technical, procedural, and governance layers that empower stakeholders to intervene. A contestable system logs decisions, preserves evidence, enables structured review, and supports corrective action.

In practical terms, contestability requires:

- Decision logging with tamper-evident immutable records.

- Clearly defined human-in-the-loop review thresholds.

- Formal appeal and redress workflows.

- Model rollback and version control capabilities.
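The appeal and redress requirement can be made concrete as a small state machine. The sketch below is hypothetical: the `Decision` class, the status names, and the allowed transitions are illustrative assumptions, not a standard. It keeps the original automated outcome alongside the final outcome, so an override never erases the evidence of what the model decided, and it records who moved each case forward.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List, Optional, Set, Tuple


class Status(Enum):
    DECIDED = "decided"
    APPEALED = "appealed"
    UNDER_REVIEW = "under_review"
    UPHELD = "upheld"
    OVERTURNED = "overturned"


# Allowed transitions in this hypothetical appeal-and-redress workflow.
TRANSITIONS: Dict[Status, Set[Status]] = {
    Status.DECIDED: {Status.APPEALED},
    Status.APPEALED: {Status.UNDER_REVIEW},
    Status.UNDER_REVIEW: {Status.UPHELD, Status.OVERTURNED},
    Status.UPHELD: set(),
    Status.OVERTURNED: set(),
}


@dataclass
class Decision:
    decision_id: str
    model_version: str
    outcome: str                         # original automated outcome, never overwritten
    status: Status = Status.DECIDED
    final_outcome: Optional[str] = None  # set only when a human resolves the appeal
    history: List[Tuple[str, str]] = field(default_factory=list)  # (status, actor)

    def transition(self, new_status: Status, actor: str) -> None:
        if new_status not in TRANSITIONS[self.status]:
            raise ValueError(f"illegal transition {self.status.value} -> {new_status.value}")
        self.history.append((new_status.value, actor))
        self.status = new_status

    def resolve(self, reviewer: str, overturn: bool, new_outcome: Optional[str] = None) -> None:
        """A human reviewer either upholds or overrides the automated outcome."""
        if overturn:
            if new_outcome is None:
                raise ValueError("an override must state the corrected outcome")
            self.transition(Status.OVERTURNED, reviewer)
            self.final_outcome = new_outcome
        else:
            self.transition(Status.UPHELD, reviewer)
            self.final_outcome = self.outcome


d = Decision("loan-123", "credit-v7", outcome="deny")
d.transition(Status.APPEALED, actor="applicant")
d.transition(Status.UNDER_REVIEW, actor="review-queue")
d.resolve(reviewer="analyst-42", overturn=True, new_outcome="approve")
print(d.status.value, d.outcome, d.final_outcome, d.history)
```

Closed cases (upheld or overturned) have no outgoing transitions, so reopening one would require an explicit, separately logged process.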

A truly contestable system separates explanation from authority. It enables humans or independent oversight bodies to override automated outputs when necessary. It allows enterprises to shift AI from deterministic automation to supervised decision support.

This shift directly strengthens AI accountability. Organizations must not only describe decisions but demonstrate that they can rectify them.

- Contestability integrates enforceable oversight into production systems.

- Structured appeals reduce systemic bias exposure.

- Intervention mechanisms evolve automation into accountable augmentation.

Regulatory Drivers in 2026: Why Explainability Alone Fails Compliance

Global regulators have intensified oversight of high-impact AI systems. The EU AI Act mandates structured risk management, documentation, transparency, and human oversight for high-risk applications. Compliance frameworks increasingly evaluate governance processes rather than post-hoc explanation dashboards.

To comply with the EU AI Act, enterprises must document dataset provenance, validation methodologies, risk assessments, and oversight mechanisms. An explanation interface alone cannot satisfy these criteria; regulators expect enforceable intervention processes and audit-ready documentation.

Similarly, frameworks from the National Institute of Standards and Technology, notably the AI Risk Management Framework (AI RMF), emphasize lifecycle governance, traceability, risk management, and continuous monitoring. These standards reinforce that AI accountability requires institutional controls, not interpretability plug-ins.

Compliance pressure therefore reshapes system architecture. Enterprises must build environments that can:

- Log every decision with full version traceability.

- Demonstrate fairness testing and drift monitoring.

- Enable documented override mechanisms with escalation paths.

Regulation thus turns explainable AI into one component of a broader accountability infrastructure.

- Regulatory regimes prioritize governance structures over explanation alone.

- EU AI Act compliance demands documented oversight and risk controls.

- Auditability requires enforceable contestation workflows.

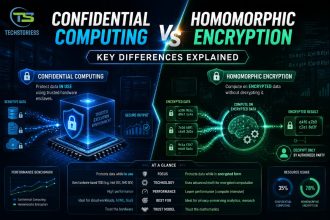

Operationalizing Contestable AI Systems: The Technical Architecture

To operationalize contestable AI systems, enterprises must embed governance controls directly into MLOps pipelines. Architecture must support traceability, fairness monitoring, and reversible deployment.

Decision Logging and Traceability

Systems must capture model inputs, feature transformations, version identifiers, and outputs in immutable storage. This logging enables forensic reconstruction and supports regulatory audits.
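One common way to make a decision log tamper-evident is to hash-chain its entries: each entry stores the hash of its predecessor, so editing any past record invalidates the chain. The sketch below shows the idea using only the standard library; the `DecisionLog` class and the record fields are hypothetical. A hash chain detects tampering but does not prevent it, so production systems would typically also write to write-once (WORM) storage or periodically anchor the latest hash somewhere the logging team cannot modify.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: Dict[str, Any]) -> str:
    # Canonical JSON (sorted keys, fixed separators) so identical records hash identically.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class DecisionLog:
    """Append-only, hash-chained log of automated decisions."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    def append(self, record: Dict[str, Any]) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        digest = entry_hash(prev, record)
        self.entries.append({"record": record, "prev": prev, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash; editing or reordering any entry makes this fail."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry_hash(prev, entry["record"]) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = DecisionLog()
log.append({"decision_id": "d1", "model_version": "credit-v7",
            "inputs": {"income_k": 40}, "output": "deny"})
log.append({"decision_id": "d2", "model_version": "credit-v7",
            "inputs": {"income_k": 85}, "output": "approve"})
print(log.verify())                             # True: chain intact
log.entries[0]["record"]["output"] = "approve"  # simulated after-the-fact edit
print(log.verify())                             # False: tampering detected
```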

Human Oversight and Escalation

Organizations must define risk thresholds that trigger mandatory manual review. These thresholds ensure high-impact decisions receive appropriate scrutiny. Review interfaces must present explanation artifacts while preserving override authority.
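A review threshold can be as simple as a rule that routes a decision to a human whenever it is high-impact or the model is unsure. The sketch below assumes two hypothetical thresholds, a financial-exposure cut-off and a confidence floor; real values would come from the organization's risk appetite and validation data. Returning the reasons alongside the route gives the reviewer context for why the case was escalated.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical policy values, for illustration only.
HIGH_IMPACT_AMOUNT = 50_000  # decisions at or above this exposure always get a human
CONFIDENCE_FLOOR = 0.80      # below this, the model is not trusted to act alone


@dataclass
class ModelOutput:
    decision: str      # e.g. "approve" or "deny"
    confidence: float  # model probability for the chosen decision
    amount: float      # financial exposure of the decision


def route(output: ModelOutput) -> Tuple[str, List[str]]:
    """Return ("auto" or "human_review", reasons for escalation)."""
    reasons: List[str] = []
    if output.amount >= HIGH_IMPACT_AMOUNT:
        reasons.append("high financial impact")
    if output.confidence < CONFIDENCE_FLOOR:
        reasons.append("low model confidence")
    return ("human_review" if reasons else "auto", reasons)


print(route(ModelOutput("approve", 0.95, 10_000)))
print(route(ModelOutput("deny", 0.62, 80_000)))
```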

Model Versioning and Rollback

Enterprises must maintain registries storing validation metrics, bias testing results, and deployment timestamps. During incidents of error, bias, or model drift, teams must execute controlled rollbacks to restore prior validated versions.
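A minimal in-memory sketch of the registry-and-rollback pattern follows. The `Registry` and `ModelVersion` classes, the deployment gate (a version must have passed bias testing), and the choice to revoke the faulty version so a later rollback cannot redeploy it are all illustrative assumptions; in practice a dedicated registry, such as the MLflow Model Registry, would usually hold this state.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Optional, Tuple


@dataclass
class ModelVersion:
    version: str
    validation_auc: float          # hypothetical headline validation metric
    bias_tests_passed: bool
    revoked: bool = False
    deployed_at: Optional[datetime] = None


@dataclass
class Registry:
    versions: Dict[str, ModelVersion] = field(default_factory=dict)
    history: List[str] = field(default_factory=list)                # deployments, newest last
    rollbacks: List[Tuple[str, str]] = field(default_factory=list)  # (version, reason)

    def register(self, mv: ModelVersion) -> None:
        self.versions[mv.version] = mv

    def deploy(self, version: str) -> None:
        mv = self.versions[version]
        if mv.revoked or not mv.bias_tests_passed:
            raise ValueError(f"{version} is not eligible for deployment")
        mv.deployed_at = datetime.now(timezone.utc)
        self.history.append(version)

    @property
    def live(self) -> Optional[str]:
        return self.history[-1] if self.history else None

    def rollback(self, reason: str) -> str:
        """Revoke the live version and redeploy the latest earlier eligible one."""
        if self.live is None:
            raise RuntimeError("nothing is deployed")
        self.versions[self.live].revoked = True
        self.rollbacks.append((self.live, reason))
        for version in reversed(self.history):
            mv = self.versions[version]
            if not mv.revoked and mv.bias_tests_passed:
                self.deploy(version)
                return version
        raise RuntimeError("no validated version available; escalate")


reg = Registry()
reg.register(ModelVersion("v1", validation_auc=0.81, bias_tests_passed=True))
reg.register(ModelVersion("v2", validation_auc=0.83, bias_tests_passed=True))
reg.deploy("v1")
reg.deploy("v2")
print(reg.rollback("fairness drift in segment B"), reg.rollbacks)
```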

Continuous Monitoring for Algorithmic Fairness

Fairness metrics must track disparate impact, bias drift, and performance variance across demographic segments. Continuous validation enforces algorithmic fairness standards over time.
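Disparate impact is often summarized as a ratio of selection rates: the favorable-outcome rate of the least-favored segment divided by that of the most-favored segment. A widely cited rule of thumb, the four-fifths rule from US employment-selection guidance, treats a ratio below 0.8 as a signal of possible adverse impact. The sketch below computes the ratio from (segment, outcome) pairs; the segment labels and counts are hypothetical, and the 0.8 threshold is a screening heuristic rather than a legal test.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

FOUR_FIFTHS = 0.8  # screening heuristic, not a legal determination


def selection_rates(outcomes: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Favorable-outcome rate per segment from (segment, favorable) pairs."""
    totals: Dict[str, int] = defaultdict(int)
    favorable: Dict[str, int] = defaultdict(int)
    for segment, ok in outcomes:
        totals[segment] += 1
        favorable[segment] += int(ok)
    return {s: favorable[s] / totals[s] for s in totals}


def disparate_impact_ratio(rates: Dict[str, float]) -> float:
    """Lowest segment rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical monitoring window: 60/100 approvals in segment A, 42/100 in segment B.
window = [("A", i < 60) for i in range(100)] + [("B", i < 42) for i in range(100)]
rates = selection_rates(window)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 2), "flag" if ratio < FOUR_FIFTHS else "ok")
```

Running the same computation on each monitoring window turns a one-off fairness test into a time series that can feed drift alerts.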

Independent Governance Layers

Oversight tooling should operate independently from inference systems. Structural separation minimizes conflicts of interest and strengthens audit credibility.

This architecture converts AI governance from documentation into production-grade control systems.

- Immutable logs facilitate enforceable AI accountability.

- Version control enables rapid and documented remediation.

- Continuous fairness monitoring institutionalizes ethical enforcement.

The Enterprise AI Governance Framework: Integrating Explainability and Contestability

Explainable AI and AI contestability are not competing models. They must be integrated into cohesive AI governance frameworks aligned with enterprise risk management.

Lifecycle Governance

Governance must extend across the entire AI lifecycle—from data ingestion and model development to deployment, monitoring, and retirement. Data validation, fairness testing, performance evaluation, and monitoring must operate as continuous feedback loops.

Executive Oversight

Boards increasingly evaluate AI risk posture as part of enterprise governance. Risk dashboards must quantify exposure levels, fairness variance, override frequency, and appeal resolution metrics.

Independent Validation

Separate model risk teams must challenge assumptions, review bias testing, and document formal approvals. Independence reinforces institutional accountability.

Harmonized Global Compliance

Multinational enterprises should align governance baselines with the EU AI Act and other regulatory standards. Regulatory harmonization reduces compliance fragmentation and operational inconsistency.

Measurable KPIs

Governance must rely on quantitative metrics: explainability coverage, appeal response times, fairness variance thresholds, override frequency, and drift detection intervals. Measurement converts governance commitments into enforceable discipline.

By integrating explainability and contestability, enterprises build resilient and defensible AI systems. Transparency clarifies decisions; contestability enables correction. Together, they establish accountable automation aligned with regulatory, ethical, and operational expectations.

Real-World Lessons: Why Explainability Isn’t Enough

While explainability introduces transparency by interpreting AI behavior, real-world incidents demonstrate that transparent systems alone are insufficient to prevent harm or enforce accountability.

In hiring and recruitment, automated systems have repeatedly revealed demonstrable bias rooted in skewed training data. Amazon’s AI recruiting tool, trained on historical resumes submitted over a ten-year period, systematically downgraded female candidates. It penalized resumes that included terms associated with women’s organizations. After internal audits revealed discriminatory patterns, the project was ultimately discontinued.

Similarly, ongoing litigation against Workday (Mobley v. Workday) alleges that its AI-based applicant screening tools discriminated against applicants based on age, race, and disability. In 2025, a U.S. federal court granted preliminary certification of a nationwide collective action on the age-discrimination claim, directly testing the legality of algorithmic hiring systems under anti-discrimination law.

Healthcare provides one of the starkest examples of uncontestable automation risk. Algorithms used by major insurers, including UnitedHealthcare, have faced allegations of denying medically necessary care, with a high share of appealed denials reportedly reversed. In another widely cited case, a U.S. health-risk prediction algorithm systematically underestimated the needs of Black patients because it used healthcare cost as a proxy for health need. Researchers showed that, because less money had historically been spent on Black patients with the same level of need, this proxy embedded structural inequity into automated decisions.

These incidents underscore a critical truth: explainable AI may reveal why a decision occurred, but only contestability empowers individuals and institutions to challenge and change decisions that cause real-world harm.

Economic Risk Modeling: The Financial Case for Contestable AI

Contestable AI is not merely a compliance exercise; it is a financial risk mitigation strategy. When automated systems influence credit approvals, pricing, hiring, healthcare prioritization, or fraud detection, errors can scale instantly and amplify liability exposure across entire customer populations.

Enterprises that fail to embed contestability expose themselves to regulatory penalties, litigation risk, reputational erosion, insurance cost escalation, and shareholder scrutiny. Financial modeling must therefore quantify downside exposure from unchallengeable automated decisions, including potential enforcement fines, settlement costs, and operational disruption.

In 2026, boards increasingly integrate AI risk into enterprise risk management and capital allocation frameworks when evaluating digital investments.

- Quantify potential penalty exposure under regulatory regimes such as the EU AI Act.

- Model litigation probability linked to biased or erroneous automated decisions.

- Assess impact on insurance premiums, credit ratings, and investor confidence.
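A first-pass exposure model can combine a regulatory ceiling with simple expected-loss arithmetic. For breaches of its high-risk obligations, the EU AI Act caps fines at €15 million or 3% of worldwide annual turnover, whichever is higher; for SMEs the lower of the two applies. The sketch below encodes that ceiling and sums probability-weighted losses across scenarios. The turnover, probabilities, and loss figures are hypothetical placeholders, and because an expected value understates tail risk, the sketch also reports a worst case.

```python
from typing import Dict, Tuple


def ai_act_fine_ceiling(annual_turnover_eur: float, fixed_cap_eur: float = 15_000_000,
                        turnover_pct: float = 0.03, is_sme: bool = False) -> float:
    """Maximum fine for the high-risk-obligation tier: the higher of the two amounts,
    or the lower of them for SMEs."""
    pct_amount = turnover_pct * annual_turnover_eur
    return min(fixed_cap_eur, pct_amount) if is_sme else max(fixed_cap_eur, pct_amount)


def expected_exposure(scenarios: Dict[str, Tuple[float, float]]) -> float:
    """Sum of probability x loss across scenarios (a simple expected-loss model)."""
    return sum(p * loss for p, loss in scenarios.values())


def worst_case(scenarios: Dict[str, Tuple[float, float]]) -> float:
    """Loss if every scenario materializes in the same year."""
    return sum(loss for _, loss in scenarios.values())


turnover = 2_000_000_000  # hypothetical annual turnover in EUR
ceiling = ai_act_fine_ceiling(turnover)
scenarios = {  # (annual probability, loss in EUR); all values hypothetical
    "regulatory fine": (0.02, ceiling),
    "class-action settlement": (0.05, 8_000_000),
    "remediation and re-review": (0.10, 1_500_000),
}
print(f"fine ceiling: EUR {ceiling:,.0f}")
print(f"expected annual exposure: EUR {expected_exposure(scenarios):,.0f}")
print(f"worst case: EUR {worst_case(scenarios):,.0f}")
```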

AI Incident Response: When Automated Decisions Go Wrong at Scale

Even rigorously validated models can fail under distribution shifts, corrupted data inputs, adversarial manipulation, or configuration errors. When failures occur at scale, automated decisions may affect thousands — or millions — of stakeholders within hours.

Enterprises must treat AI incidents as operational crises rather than technical anomalies. Incident response plans should define escalation chains, stakeholder communication protocols, regulatory notification requirements, and rapid rollback procedures.

During such failures, contestability mechanisms function as critical containment infrastructure, enabling pause, review, and corrective intervention.

- Establish AI-specific incident severity classifications.

- Maintain emergency rollback playbooks for high-risk systems.

- Conduct simulation exercises for systemic AI failure scenarios.
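Severity classes are easiest to apply under pressure when they are written down as explicit rules mapped to playbook actions. The sketch below is hypothetical: the three tiers, the 1,000-decision cut-off, and the playbook steps are illustrative assumptions that an organization would replace with its own criteria and with whatever regulatory notification duties apply to it.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Dict, List


class Severity(IntEnum):
    SEV1 = 1  # systemic harm: stop automated decisions
    SEV2 = 2  # material impact: coordinated response
    SEV3 = 3  # contained: monitor


@dataclass
class Incident:
    system_risk_tier: str      # "high" or "standard", taken from the AI system register
    affected_decisions: int
    harm_to_individuals: bool  # e.g. wrongful denials of credit, care, or employment


SCALE_CUTOFF = 1_000  # hypothetical threshold for "at scale"

PLAYBOOK: Dict[Severity, List[str]] = {
    Severity.SEV1: ["pause automated decisions", "roll back to last validated version",
                    "notify legal and assess regulatory reporting duties",
                    "reopen affected decisions for human review"],
    Severity.SEV2: ["route new decisions to human review", "open root-cause analysis"],
    Severity.SEV3: ["log incident", "increase monitoring"],
}


def classify(incident: Incident) -> Severity:
    at_scale = incident.affected_decisions >= SCALE_CUTOFF
    if incident.harm_to_individuals and (incident.system_risk_tier == "high" or at_scale):
        return Severity.SEV1
    if incident.harm_to_individuals or at_scale:
        return Severity.SEV2
    return Severity.SEV3


sev = classify(Incident("high", affected_decisions=4_200, harm_to_individuals=True))
print(sev.name, PLAYBOOK[sev])
```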

Vendor and Third-Party Model Accountability

Most enterprises rely on third-party AI vendors, APIs, foundation models, or embedded intelligence within enterprise platforms. However, even when development is outsourced, accountability remains with the deploying organization.

If a vendor model produces discriminatory, unsafe, or erroneous outcomes, the enterprise deploying it remains exposed to regulatory liability and reputational risk.

Governance frameworks must therefore extend beyond procurement boundaries to include contractual, operational, and technical oversight of third-party providers. Contestability pathways must function seamlessly across vendor ecosystems.

- Embed audit, transparency, and documentation clauses into vendor contracts.

- Require evidence of training data provenance, validation testing, and bias assessments.

- Define shared escalation and remediation protocols for cross-organizational disputes.

Board-Level AI Risk Literacy: Governance Starts at the Top

AI governance cannot remain confined to technical teams or compliance departments. Boards increasingly recognize AI as a strategic risk vector requiring structured oversight and informed supervision.

Directors must understand contestability mechanisms, fairness metrics, model risk classifications, and exposure thresholds. Without board-level literacy, governance becomes fragmented and reactive.

Strategic oversight ensures AI accountability aligns with enterprise risk management priorities, capital allocation decisions, and long-term corporate resilience.

- Develop executive dashboards summarizing contestation metrics, fairness variance, and exposure levels.

- Conduct periodic AI risk briefings for directors and audit committees.

- Establish independent AI oversight committees reporting directly to the board.

Cross-Functional Governance as a Structural Norm

Effective contestability cannot operate in silos. Episodic collaboration is insufficient. Data scientists, legal teams, compliance officers, risk managers, and product leaders must collaborate continuously to assess risk exposure and validate deployment decisions.

Through cross-functional review forums, organizations can institutionalize shared risk ownership and minimize blind spots. These structures strengthen pre-deployment risk assessment and clarify escalation pathways. Regular governance checkpoints create structured opportunities to challenge assumptions, validate fairness testing, and review edge cases before production release.

Such collaborative design strengthens AI accountability without unnecessarily slowing innovation. When governance is integrated early, it prevents costly post-deployment remediation.

- Establish recurring AI governance review councils.

- Require cross-team sign-offs for high-impact deployments.

- Maintain shared documentation repositories accessible across functions.

Actionable Implementation Framework: Turning Governance into Immediate Execution

The following framework translates governance theory into operational execution. It is designed for immediate enterprise adoption and structured as a 90-day activation roadmap. Each phase produces measurable artifacts that strengthen AI accountability while preserving delivery velocity and business continuity.

Phase 1 (Days 0–15): System Exposure Mapping

The first step is to create a structured inventory of all AI systems influencing financial, reputational, operational, or access-related outcomes. This is not a technical audit; it is a risk exposure mapping exercise aligned with enterprise governance priorities and regulatory sensitivity.

Deliverables

- A ranked AI system register categorized by business impact and regulatory risk.

- Identification of high-risk decision points requiring formal contestability pathways.

- Ownership mapping that assigns accountable executive and operational leaders.
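The ranked register can start as a simple scoring exercise. The sketch below assumes a hypothetical 1 to 5 rubric for business impact and regulatory risk, ranks systems by the product of the two, flags systems whose score crosses an assumed contestability threshold, and lists systems that still lack an accountable executive owner. The system names, scores, and threshold are illustrative only.

```python
from dataclasses import dataclass
from typing import List, Optional

CONTESTABILITY_THRESHOLD = 12  # hypothetical cut-off on the 1-25 exposure scale


@dataclass
class AISystem:
    name: str
    business_impact: int   # 1 (minor) to 5 (affects rights, access, or finances)
    regulatory_risk: int   # 1 (unregulated) to 5 (e.g. high-risk use under the EU AI Act)
    executive_owner: Optional[str] = None

    @property
    def exposure(self) -> int:
        return self.business_impact * self.regulatory_risk


def ranked_register(systems: List[AISystem]) -> List[AISystem]:
    return sorted(systems, key=lambda s: s.exposure, reverse=True)


def needs_contestability(systems: List[AISystem]) -> List[str]:
    return [s.name for s in ranked_register(systems) if s.exposure >= CONTESTABILITY_THRESHOLD]


def ownership_gaps(systems: List[AISystem]) -> List[str]:
    return [s.name for s in systems if s.executive_owner is None]


inventory = [
    AISystem("marketing-copy-generator", 2, 1, executive_owner="CMO"),
    AISystem("credit-scoring", 5, 5, executive_owner="CRO"),
    AISystem("resume-screening", 4, 5),
]
for s in ranked_register(inventory):
    print(f"{s.exposure:>2}  {s.name}  owner={s.executive_owner}")
print("contestability required:", needs_contestability(inventory))
print("missing owners:", ownership_gaps(inventory))
```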

This phase establishes visibility into where governance controls must be embedded first and clarifies responsibility before accountability gaps emerge.

Phase 2 (Days 15–30): Decision Traceability Activation

Activate production-level decision traceability across existing deployments without disrupting active business operations. The objective is to ensure that every automated output can be reconstructed, reviewed, audited, and evaluated retrospectively to support internal oversight, regulatory inspection, and appeal resolution.

Deliverables

- Immutable logging configuration for high-impact models.

- Standardized metadata schema capturing model version, training dataset reference, input class, decision thresholds, and output.

- Internal audit validation confirming logging completeness and evidentiary integrity.

Traceability transforms explainability artifacts into legally defensible evidence and audit-ready documentation.
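Internal audit's completeness check can be automated against the metadata schema. The sketch below assumes hypothetical field names that mirror the schema listed above, plus a decision identifier and timestamp, and reports the share of records that carry every required field. It checks presence only, not whether the values are correct.

```python
from typing import Any, Dict, Iterable, List

# Hypothetical field names mirroring the standardized metadata schema.
REQUIRED_FIELDS = (
    "decision_id", "timestamp", "model_version", "training_dataset_ref",
    "input_class", "decision_threshold", "output",
)


def missing_fields(record: Dict[str, Any]) -> List[str]:
    # A field counts as missing if absent, None, or an empty string (0 is a valid value).
    return [f for f in REQUIRED_FIELDS if record.get(f) is None or record.get(f) == ""]


def completeness_report(records: Iterable[Dict[str, Any]]) -> Dict[str, Any]:
    total = 0
    incomplete: Dict[str, List[str]] = {}
    for i, record in enumerate(records):
        total += 1
        gaps = missing_fields(record)
        if gaps:
            incomplete[record.get("decision_id") or f"record #{i}"] = gaps
    complete_pct = 100.0 * (total - len(incomplete)) / total if total else 100.0
    return {"total": total, "complete_pct": complete_pct, "incomplete": incomplete}


sample = [
    {"decision_id": "d1", "timestamp": "2026-01-05T10:00:00Z", "model_version": "credit-v7",
     "training_dataset_ref": "ds-2025-q4", "input_class": "retail_loan",
     "decision_threshold": 0.5, "output": "deny"},
    {"decision_id": "d2", "timestamp": "2026-01-05T10:01:00Z", "model_version": "credit-v7",
     "training_dataset_ref": "", "input_class": "retail_loan",
     "decision_threshold": 0.5, "output": "approve"},
]
print(completeness_report(sample))
```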

Phase 3 (Days 30–45): Contestation Workflow Deployment

Design and deploy structured review pathways allowing stakeholders — internal and external — to challenge automated decisions. This includes clearly defined escalation channels and review authorities.

Deliverables

- Predefined risk thresholds triggering mandatory human oversight.

- SLA-bound appeal resolution timelines with documented review procedures.

- Override authorization matrix specifying escalation hierarchy and decision authority.

This phase operationalizes procedural fairness and strengthens institutional accountability by converting transparency into enforceable intervention capability.
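The override authorization matrix and the appeal SLAs amount to configuration plus two checks. The sketch below uses hypothetical roles, risk tiers, and SLA durations (tighter for higher-risk decisions); an organization would substitute its own hierarchy and timelines and would typically enforce these checks in its case-management system.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, Set

# Hypothetical override authorization matrix: risk tier -> roles allowed to override.
OVERRIDE_AUTHORITY: Dict[str, Set[str]] = {
    "low": {"analyst", "senior_analyst", "risk_officer"},
    "medium": {"senior_analyst", "risk_officer"},
    "high": {"risk_officer"},
}

# Hypothetical appeal resolution SLAs per risk tier.
APPEAL_SLA: Dict[str, timedelta] = {
    "low": timedelta(days=10),
    "medium": timedelta(days=5),
    "high": timedelta(days=2),
}


def can_override(role: str, risk_tier: str) -> bool:
    return role in OVERRIDE_AUTHORITY[risk_tier]


def appeal_deadline(filed_at: datetime, risk_tier: str) -> datetime:
    return filed_at + APPEAL_SLA[risk_tier]


def sla_breached(filed_at: datetime, risk_tier: str, now: datetime) -> bool:
    return now > appeal_deadline(filed_at, risk_tier)


filed = datetime(2026, 3, 2, 9, 0, tzinfo=timezone.utc)
print(can_override("analyst", "high"), can_override("risk_officer", "high"))
print(appeal_deadline(filed, "high").isoformat())
print(sla_breached(filed, "high", now=datetime(2026, 3, 5, 9, 0, tzinfo=timezone.utc)))
```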

Phase 4 (Days 45–60): Fairness Stress Testing and Bias Controls

Introduce structured stress simulations across demographic, behavioral, and geographic segments. The objective is to identify systemic bias, disparate impact, or performance drift before exposure escalates into reputational damage or regulatory scrutiny.

Deliverables

- Cross-segment performance variance and disparate impact reports.

- Drift alert thresholds embedded into continuous monitoring systems.

- Escalation protocol for fairness deviations with remediation triggers.

This phase institutionalizes algorithmic fairness as a continuous governance control rather than a one-time compliance exercise.
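Drift alert thresholds are often expressed with the Population Stability Index (PSI), which compares the binned distribution of a score or feature in production against a reference window. A common industry convention treats PSI below 0.1 as stable, 0.1 to 0.25 as a moderate shift worth investigating, and above 0.25 as a significant shift; these cut-offs are rules of thumb, not standards. The sketch below is a minimal standard-library implementation with hypothetical bin proportions.

```python
import math
from typing import List, Sequence


def bin_proportions(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Share of values in each bin; `edges` are ascending interior bin boundaries."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[sum(v >= e for e in edges)] += 1  # number of edges <= v is the bin index
    return [c / len(values) for c in counts]


def psi(expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6) -> float:
    """Population Stability Index: sum over bins of (a - e) * ln(a / e)."""
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # floor empty bins to avoid log(0)
        total += (a - e) * math.log(a / e)
    return total


def drift_status(value: float, warn: float = 0.1, alert: float = 0.25) -> str:
    if value >= alert:
        return "alert"
    if value >= warn:
        return "warn"
    return "ok"


reference = [0.25, 0.25, 0.25, 0.25]  # hypothetical score-bin shares at validation time
current = [0.10, 0.20, 0.30, 0.40]    # hypothetical shares in the latest production window
value = psi(reference, current)
print(round(value, 3), drift_status(value))
```

Computing PSI separately for each demographic segment lets the same alerting logic surface fairness drift, not just aggregate drift.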

Phase 5 (Days 60–75): Independent Validation and Challenge Sessions

Conduct structured independent review sessions in which separate model risk or governance teams evaluate assumptions, data provenance, fairness testing methodologies, and model behavior under edge-case scenarios.

Deliverables

- Documented validation reports signed by independent reviewers.

- Risk classification updates reflecting validation findings.

- Remediation backlog prioritized by materiality, impact, and regulatory exposure.

Independent validation strengthens credibility, defensibility, and audit resilience.

Phase 6 (Days 75–90): Executive Dashboard and KPI Integration

Convert operational controls into executive-level performance indicators. This elevates AI governance to the board level and integrates accountability into enterprise risk reporting. By making governance visible at the strategic layer, oversight becomes measurable rather than symbolic.

Core KPIs

- Percentage of AI systems with active contestation pathways.

- Average appeal resolution time and override frequency.

- Fairness variance index across monitored segments.

- Model rollback frequency and remediation response speed.

Executive dashboards transform governance controls into measurable enterprise discipline and strategic risk indicators.
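The core KPIs can be rolled up from per-system statistics. The sketch below is hypothetical: the `SystemStats` fields are assumptions about what each system reports, and because the fairness variance index is not defined here, the sketch assumes it is the average gap between the highest and lowest favorable-outcome rates across monitored segments. Remediation response speed is omitted for brevity.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Dict, List


@dataclass
class SystemStats:
    name: str
    has_contestation_pathway: bool
    decisions: int
    overrides: int
    rollbacks: int
    appeal_resolution_days: List[float] = field(default_factory=list)
    segment_rates: Dict[str, float] = field(default_factory=dict)  # favorable rate per segment


def governance_kpis(systems: List[SystemStats]) -> Dict[str, float]:
    if not systems:
        raise ValueError("no systems to report on")
    appeal_days = [d for s in systems for d in s.appeal_resolution_days]
    total_decisions = sum(s.decisions for s in systems)
    # Assumed definition: max-min gap in segment rates, averaged over reporting systems.
    gaps = [max(s.segment_rates.values()) - min(s.segment_rates.values())
            for s in systems if s.segment_rates]
    return {
        "pct_with_contestation": 100.0 * sum(s.has_contestation_pathway for s in systems) / len(systems),
        "avg_appeal_resolution_days": mean(appeal_days) if appeal_days else 0.0,
        "override_rate": sum(s.overrides for s in systems) / total_decisions if total_decisions else 0.0,
        "fairness_variance_index": mean(gaps) if gaps else 0.0,
        "rollbacks": float(sum(s.rollbacks for s in systems)),
    }


portfolio = [
    SystemStats("credit-scoring", True, decisions=10_000, overrides=150, rollbacks=1,
                appeal_resolution_days=[2.0, 4.0, 3.0], segment_rates={"A": 0.60, "B": 0.52}),
    SystemStats("resume-screening", False, decisions=5_000, overrides=50, rollbacks=0,
                segment_rates={"A": 0.30, "B": 0.24}),
]
for name, value in governance_kpis(portfolio).items():
    print(f"{name}: {value:.3f}")
```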

Immediate Strategic Insight

Governance based solely on documentation fails under real-world pressure and regulatory scrutiny. Organizations must shift toward execution-driven governance that embeds oversight directly into operational systems.

The actionable framework above shifts focus from conceptual alignment to enforceable operational control.

By implementing exposure mapping, decision traceability, contestation workflows, fairness stress testing, independent validation, and executive KPI integration, enterprises convert AI governance frameworks into measurable safeguards that reduce legal exposure and enhance institutional trust.

The execution layer transforms explainable AI and AI contestability from policy language into production-grade resilience.

Conclusion

In 2026, enterprises require both explainable AI and AI contestability to achieve defensible, accountable automation. While explainability provides insight into model reasoning, contestability enables structured challenge and correction. Regulatory regimes such as the EU AI Act demand enforceable oversight, documented traceability, and measurable governance controls.

Truly contestable AI systems embed fairness monitoring, version control, appeal workflows, and independent validation directly into their architecture. By deploying them, organizations operationalize algorithmic fairness, strengthen AI accountability, and align governance with compliance mandates.

To lead responsibly in high-impact AI environments, organizations must design systems that can be questioned, reviewed, and improved. This approach transforms AI governance from reactive compliance into strategic resilience.

FAQ

What is the difference between AI explainability and AI contestability?

AI explainability answers the question “why did the system produce this output?” — it makes model decisions transparent and traceable. AI contestability goes one step further: it gives affected parties the ability to challenge, appeal, and trigger a genuine review of that decision. A system can be explainable without being contestable — clear outputs do not automatically mean anyone can dispute them.

Do enterprises need both AI explainability and contestability to comply with the EU AI Act in 2026?

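In practice, yes. Under the EU AI Act as adopted, most obligations, including those for the high-risk systems listed in Annex III, apply from 2 August 2026, while obligations for high-risk AI embedded in regulated products follow in August 2027. The Act requires high-risk systems to support record-keeping, transparency toward deployers, and effective human oversight, and it gives affected persons a right to an explanation of certain decisions based on their output. Separately, GDPR Article 22 entitles individuals to obtain human intervention in, and to contest, solely automated decisions with legal or similarly significant effects. Explainability helps satisfy the transparency and explanation requirements, while contestability supplies the oversight and challenge mechanisms. Enterprises that address only explainability remain exposed to fines of up to €15 million or 3% of worldwide annual turnover, whichever is higher, for breaches of high-risk obligations; the top tier of €35 million or 7% is reserved for prohibited AI practices.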

Which industries are most exposed to AI contestability and explainability risk in 2026?

Financial services, healthcare, HR and recruitment, public safety, and insurance carry the highest exposure. These sectors use AI to make decisions that directly affect individuals — credit scoring, medical triage, hiring, fraud detection — where both regulators and courts now expect full auditability and a clear appeals path. Enterprises in these verticals without both frameworks in place face the greatest regulatory, legal, and reputational risk.

Can an AI system be explainable but not contestable?

Yes — and this is the critical governance gap most enterprises overlook. A model can generate fluent, readable explanations for every decision while offering no mechanism for a user to challenge the underlying reasoning, trigger a human review, or seek redress. Explainability without contestability produces the appearance of accountability without the substance of it. In high-stakes domains, this distinction has direct legal and ethical consequences.

How should enterprises build an AI contestability framework in 2026?

A practical enterprise contestability framework covers four dimensions: human-centered interfaces that allow users to raise challenges easily; technical architecture that logs reasoning chains and supports audit; legal and policy workflows that define escalation paths and decision review timelines; and organizational governance that assigns accountability for outcomes. Building on an existing explainability layer (audit trails, influence scoring, decision logs) is often the fastest path to compliance.