Enterprise AI adoption has reached a defining inflection point in 2026. Organizations that have successfully navigated the journey from AI pilot to production are unlocking transformational business value — while those still trapped in experimentation cycles fall further behind. Understanding this shift is the first step toward building an effective AI integration strategy.

- Enterprise AI Adoption at the Inflection Point: Why 2026 Is Different

- Understanding the Pilot-to-Production Gap

- The “Science Project” Trap

- AI Integration Strategy Gaps That Block Scaling

- Reframing Enterprise AI ROI: Beyond Model Metrics

- From Labs to Operations: Enterprise AI Adoption Mindset

- From Isolated Models to Enterprise Platforms

- AI Integration Strategy as Value Creation for Enterprise AI Adoption

- AI Governance as a Scaling Enabler for Enterprise AI

- Organizational Maturity: The Human Side of Scale

- A Phased AI Implementation Roadmap to Production Scale: Building Your Enterprise AI Foundation

- The Economics of AI at Scale: From CapEx Experiment to OpEx Discipline

- Data as the True Moat: Quality, Lineage, and Ownership

- From Automation to Augmentation: Redesigning Work Itself

- Measuring What Matters: Beyond Accuracy to Enterprise Impact

- Conclusion

- FAQ

The last 10 years have marked a remarkable shift for Artificial Intelligence. AI is no longer confined to research labs: it has evolved into a core component of daily operations and a central strategic priority for enterprises. That said, most organizations still find it challenging to convert AI potential into industrial productivity. While global enterprises are making unprecedented investments in models, cloud infrastructure, and generative AI capabilities, many continue to struggle to turn experimentation into enduring business impact.

More than model capability, the real bottleneck is organizational readiness.

In 2026, enterprises are encountering a structural inflection point. The early phase of enterprise AI adoption was defined by pilots, proof-of-concepts, and innovation labs. Today, competitive advantage is determined by operational scale – embedding AI into workflows, governance systems, cost structures, and enterprise architecture.

The shift underway is fundamental:

- From experimentation to institutionalization

- From model accuracy to measurable enterprise AI ROI

- From isolated innovation to integrated infrastructure

- From short-term enthusiasm to long-term discipline

To achieve tangible success in this phase, organizations do not just need the most advanced algorithms. They must build a resilient foundational environment capable of supporting production-grade AI – aligning data maturity, governance, architecture, economics, and organizational design around scalable AI systems.

In this post, we will explore why 2026 represents a strategic turning point – and how organizations can truly move from pilot purgatory to production power.

Enterprise AI Adoption at the Inflection Point: Why 2026 Is Different

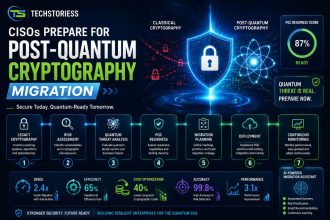

Despite rising investments and widespread experimentation, translating early AI success into sustained, production-scale business impact remains a formidable operational challenge. Industry research consistently highlights the persistent gap between ambition and execution. According to KPMG, although 70%–87% of enterprises have launched AI initiatives, only a small fraction successfully scale them into long-term production systems that generate measurable business value.

This reality underscores a critical truth: it is relatively easy to experiment, but far harder to operationalize. Simply refining models is not enough to move from AI pilot to production. It demands resilient infrastructure, governance discipline, cross-functional alignment, and long-term economic sustainability.

To ensure a successful transition from AI pilot to production, enterprises must adopt a well-structured AI implementation roadmap that aligns infrastructure readiness, governance maturity, and measurable enterprise AI ROI expectations from the outset.

Often described as “pilot purgatory,” this structural bottleneck defines the current transition phase. In 2026, enterprises are shifting from isolated proof-of-concept experiments toward building sustainable, enterprise-grade AI platforms. Scaled AI is no longer an abstract ambition; it is directly tied to competitiveness, operational efficiency, and innovation capacity.

Leaders now face three stark realities:

High investment, limited realization: Billions of dollars are invested annually in AI infrastructure, platforms, and tools, yet a significant portion of initiatives fail to generate measurable business value or reach production scale.

Executive optimism versus operational reality: While C-suite leaders remain confident about AI’s transformative potential, frontline teams grapple with integration complexity, data quality issues, governance gaps, scaling constraints, and unclear performance metrics.

The strategic imperative for operational discipline: The difference between failure and sustained success increasingly depends less on model sophistication and more on how effectively AI systems are embedded into daily workflows, decision cycles, and accountability structures.

To craft a coherent enterprise AI strategy, it is essential to understand why this inflection point matters – and how to move decisively beyond experimentation toward durable, measurable value creation.

Understanding the Pilot-to-Production Gap

The gap between a successful pilot and a production-ready AI system is far wider than assumed by most executives. As many as 73% of AI initiatives fail to progress beyond the pilot stage, leaving behind unused models, stranded budgets, and unmet expectations.

This gap reveals a systemic weakness in enterprise AI adoption, where organizations focus on experimentation instead of building a scalable AI integration strategy to support long-term production environments.

The “Science Project” Trap

Many AI pilots begin in controlled, near-academic environments. Teams experiment with carefully curated data, tightly managed test conditions, and polished dashboards that showcase impressive performance metrics. However, applications that excel in a sandbox environment rarely withstand the messy complexity of real-world operations.

In controlled pilots:

- Cleaner, well-structured data is readily available compared to noisy, fragmented live systems.

- Integration with core enterprise systems such as billing platforms, CRM systems, and supply chain tools is simulated or minimal.

- Engineers manually fine-tune parameters and workflows in ways that are not sustainable at scale.

Once exposed to real operational data – characterized by unknown noise, shifting inputs, incomplete records, and unpredictable workloads – these pilots often lose accuracy and stability. This pattern – models excelling in controlled environments but failing under real-world pressure – is a primary reason why only about one in five AI pilots successfully scales into production.

AI Integration Strategy Gaps That Block Scaling

An enterprise AI integration strategy goes well beyond “just engineering”; it is a foundational requirement for moving from AI pilot to production and for scaling AI sustainably across complex enterprise systems.

For instance, a customer churn prediction model may demonstrate excellent accuracy in a test environment, but it delivers little value unless it securely and reliably connects to the enterprise’s operational systems. A robust AI integration strategy requires rethinking how systems communicate, how data flows are governed and secured, and how predictions automatically trigger business actions.

To meaningfully operationalize AI, organizations need robust API frameworks, real-time data pipelines, and cross-platform integration capabilities. Many enterprises lack these foundational elements, making it difficult to embed AI into workflows that drive measurable outcomes.
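As a minimal sketch of what "predictions automatically trigger business actions" could look like for the churn example, the snippet below maps a churn score to a next step. The class name, thresholds, and action names are hypothetical placeholders chosen for illustration; they do not come from any specific platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChurnPrediction:
    customer_id: str
    churn_probability: float  # model output, expected to lie in [0, 1]


def choose_action(pred: ChurnPrediction, high: float = 0.8, medium: float = 0.5) -> str:
    """Map a churn score to a hypothetical business action name."""
    if not 0.0 <= pred.churn_probability <= 1.0:
        raise ValueError("churn_probability must be between 0 and 1")
    if pred.churn_probability >= high:
        return "open_retention_case"   # assumed action: hand off to an account manager
    if pred.churn_probability >= medium:
        return "send_retention_offer"  # assumed action: automated campaign
    return "no_action"


print(choose_action(ChurnPrediction("c-1", 0.91)))
```

In a real deployment the returned action would be handed to the relevant operational system (CRM, ticketing, campaign tooling) through its own API, with the thresholds owned by the business rather than hard-coded.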

Undefined Ownership & Operational Drift

AI pilots are often led by highly skilled IT teams, data scientists, or innovation labs. But once the pilot phase concludes, accountability for monitoring, retraining, governance, and incident response frequently becomes unclear. Without defined ownership, model performance gradually degrades, feedback loops weaken, and operational trust erodes.

Until a dedicated function – whether DevOps, IT operations, or a formal AI Ops team – assumes clear responsibility, AI systems drift, decay, and eventually lose business relevance.

In the absence of clearly defined ownership models embedded within AI governance frameworks, enterprises face challenges in sustaining trust, accountability, and long-term system performance in production environments.

Reframing Enterprise AI ROI: Beyond Model Metrics

Traditional pilot metrics emphasize abstract model performance indicators such as accuracy, precision, recall, and F1 score. However, business leaders and investors demand tangible business outcomes – revenue uplift, cost reduction, risk mitigation, and productivity gains. Gartner research indicates that the absence of clearly defined, measurable business value in production is one of the leading reasons why nearly 30% of AI pilots stall. Enterprise AI ROI therefore needs to be reframed – not as a post-deployment metric, but as a core design principle integrated into the AI implementation roadmap from the earliest stages.

To convert pilot success into production-scale impact, organizations must shift their focus from technical validation to business performance outcomes. ROI must be defined in commercial and operational terms – not merely in model performance metrics.

From Labs to Operations: Enterprise AI Adoption Mindset

To scale AI in production successfully, organizations cannot rely on individual models or isolated use cases. They need a complete set of capabilities and systems that can consistently meet the volume and complexity of daily operations. Pilots are controlled experiments that receive careful attention and manual tweaks to achieve optimum results. Production, however, is an operational environment that demands systems, automation, controls, quality assurance, and repeatability. To make this transition, enterprises must align their AI adoption efforts with a long-term vision for scaling AI to production rather than treating pilots as isolated technical exercises.

From Isolated Models to Enterprise Platforms

Enterprises that treat AI initiatives as isolated innovation pockets rather than integrated components of the complete technology stack often struggle to sustain performance and scale outcomes. Successful organizations, on the other hand, design resilient, end-to-end production systems that treat AI models as modular, composable services that integrate seamlessly with APIs, monitoring, and governance.

A product mindset: Treating AI outputs as products, with defined performance standards, SLAs, and user expectations.

Governance discipline: Ethical guardrails, bias checks, and compliance integration baked into process design.

Gartner data suggests that organizations that adopt a product-oriented approach toward AI initiatives, with clear ownership and KPIs, are 4.2× more likely to scale successfully than those without integration planning.

This platform-centric approach is crucial for organizations looking to mature their AI integration strategy and operationalize AI across various key business units.

Project Thinking vs. Product Thinking

Unlike pilots that often operate as projects – launched, showcased, and then closed – production demands ongoing development cycles, maintenance planning, feature upgrades, and lifecycle governance. This is a fundamental organizational shift that demands executive backing, cross-functional alignment, and investment in architectural discipline. Product thinking also makes enterprise AI ROI easier to track, as systems are continuously optimized against business outcomes rather than one-time project success metrics.

The Production Foundation: Industrial-Grade MLOps

Machine Learning Operations (MLOps) holds the central position in scaling AI. It is the practice of applying disciplined software engineering principles to the full ML lifecycle, from data ingestion to deployment and ongoing monitoring. MLOps is a crucial part of execution: it helps organizations transition from AI pilot to production while supporting consistent, repeatable scaling across multiple use cases.

Version Control Across the Board

Unlike conventional software where version control is standard for code, in AI systems the scope expands to include other critical artifacts like:

- Datasets and their lineage

- Model parameters and versions

- Performance metrics and drift indicators

Tools like MLflow, DVC, and Weights & Biases help orchestrate reproducibility and auditability, ensuring that every model version can be traced and rolled back if necessary. This minimizes risk and accelerates confidence in production deployments.
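To make the idea of versioning data alongside models concrete, here is a small standard-library sketch that fingerprints a dataset and ties it to a model version and its metrics. It is an illustration only and is not how MLflow, DVC, or Weights & Biases work internally; the function names and record fields are assumptions.

```python
import hashlib
import json


def fingerprint_rows(rows: list[dict]) -> str:
    """Return a SHA-256 digest of the dataset content.

    Key order inside each row does not affect the digest, but row order does.
    """
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def build_lineage_record(model_version: str, rows: list[dict], metrics: dict) -> dict:
    """Tie a model version to the exact data and metrics it was evaluated with."""
    return {
        "model_version": model_version,        # hypothetical version label
        "dataset_sha256": fingerprint_rows(rows),
        "row_count": len(rows),
        "metrics": dict(metrics),
    }


training_rows = [{"id": 1, "churned": 0}, {"id": 2, "churned": 1}]
record = build_lineage_record("1.0.0", training_rows, {"f1": 0.8})
print(json.dumps(record, indent=2))
```

If the digest stored with a deployed model no longer matches the digest of the data a team believes it was trained on, that mismatch is an early signal that the lineage needs investigating before any rollback decision.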

Automated Pipeline Orchestration

Manual procedures like periodically retraining models by hand or reconfiguring environments fail to scale in complex enterprise environments. Automated pipelines employing tools like Airflow, Kubeflow, or proprietary platforms can orchestrate training, validation, deployment, and monitoring in systematic and repeatable ways.

Continuous monitoring is vital:

- Track performance against real-world data

- Detect data drift (when input data distribution shifts)

- Detect concept drift (when relationships between inputs and outputs change)

This ongoing vigilance ensures that models continue to retain their accuracy and relevance over time. Without it, models degrade rapidly and decisions become unreliable.
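One common way to quantify data drift for a single numeric feature is the Population Stability Index (PSI), which compares the binned distribution of live inputs against a training-time baseline. The sketch below is a plain-Python illustration under stated assumptions: equal-width bins derived from the baseline, a small floor to avoid division by zero, and the widely quoted rule-of-thumb thresholds of 0.1 and 0.25, which should be tuned per use case. Detecting concept drift additionally requires labeled outcomes and is not covered here.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a baseline sample and a live sample of one numeric feature."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("baseline sample has no spread")
    width = (hi - lo) / bins

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values into the edge bins
            counts[idx] += 1
        # Floor each share at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_status(psi, warn=0.1, alert=0.25):
    """Translate a PSI value into a label using assumed rule-of-thumb thresholds."""
    if psi >= alert:
        return "alert"
    if psi >= warn:
        return "warn"
    return "stable"


baseline = [float(v) for v in range(100)]
live = [v + 50.0 for v in baseline]
print(drift_status(population_stability_index(baseline, live)))
```

A scheduled job could run this check per feature and raise an incident or trigger retraining when the status moves past "stable".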

Strategic Technology Integration

In production settings, these technical practices are the foundation of repeatable and reliable AI systems – systems that evolve beyond handcrafted experiments into robust, enterprise-grade solutions.

Architectural Considerations for Scale

It is essential to choose the right architecture to support production-grade AI. This is not just about “technical implementation” – it involves designing for unpredictable workload patterns, multi-tenant operations, security requirements, and compliance constraints.

AI Integration Strategy as Value Creation for Enterprise AI Adoption

To achieve tangible success with AI, organizations need to understand that models don’t create value – integration does. A well-defined AI integration strategy acts as the primary driver of value realization and a crucial determinant of successful enterprise AI adoption at scale.

According to a McKinsey global AI survey, 55–60% of organizations use AI in at least one function, yet very few achieve significant business impact. Even highly advanced models fail to deliver results in the absence of operational embedding.

To scale in real environments, AI needs to move beyond analytical dashboards. It must:

- Impact decisions in real workflows

- Trigger automated actions in real time

- Feed downstream systems

- Operate within defined enterprise SLAs

Embedding AI into Business Workflows

Scaling AI requires moving from “insight delivery” to “decision orchestration.”

For example:

- Fraud detection models must integrate seamlessly with transaction approval systems.

- Demand forecasting models must feed procurement engines.

- Marketing propensity models must trigger real-time campaign automation to increase conversion rates and customer engagement.

Gartner highlights that by 2026, over 70% of enterprise AI value will be derived from AI embedded into operational workflows rather than standalone analytics.

This level of embedding is crucial to achieving meaningful enterprise AI ROI, as tangible value is realized only when AI influences real decisions and actions.

Treating AI Outputs as Data Products

AI predictions should be treated as governed data assets – versioned, documented, and monitored:

- Timestamped

- Logged

- Quality-monitored

- Auditable

This ensures downstream reliability. It also supports compliance and post-incident traceability.
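The properties listed above can be sketched as a small, immutable prediction record that is timestamped at creation and serialized as one log line. The field names and example values are hypothetical; a production system would typically add input references, quality checks, and a durable log store.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def _utc_now_iso() -> str:
    """Current time as an ISO 8601 string with an explicit UTC offset."""
    return datetime.now(timezone.utc).isoformat()


@dataclass(frozen=True)
class PredictionRecord:
    model_name: str
    model_version: str
    request_id: str
    score: float
    created_at: str = field(default_factory=_utc_now_iso)  # timestamped on creation


def to_log_line(record: PredictionRecord) -> str:
    """Serialize the record as a single JSON line suitable for an audit log."""
    return json.dumps(asdict(record), sort_keys=True)


record = PredictionRecord("churn-model", "1.4.0", "req-001", 0.73)
print(to_log_line(record))
```

Because each line carries the model version and a timestamp, a downstream team can later reconstruct which model produced which decision during an audit or incident review.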

SLAs and Reliability

Production AI requires:

- Defined uptime expectations

- Latency guarantees

- Incident response playbooks

- Clear accountability structures

As AI influences customer decisions, it becomes infrastructure. At scale, it cannot behave like a research artifact.
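A latency guarantee is usually expressed as a percentile rather than an average. The following sketch checks observed inference latencies against a p95 budget using the nearest-rank method; the 300 ms budget is an arbitrary illustrative value, not a recommendation.

```python
import math


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def meets_latency_sla(latencies_ms: list[float], p95_budget_ms: float = 300.0) -> bool:
    """True when the observed p95 latency is within the (assumed) budget."""
    return percentile(latencies_ms, 95) <= p95_budget_ms


observed = [120.0] * 99 + [900.0]  # one slow outlier among 100 requests
print(meets_latency_sla(observed))
```

Running such a check over a rolling window is one simple way to connect a written SLA to an alert that feeds the incident response playbook.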

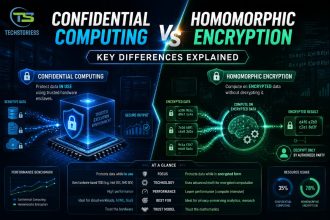

AI Governance as a Scaling Enabler for Enterprise AI

Governance is widely misunderstood. It is not friction – it is the mechanism that makes scale sustainable. Strong AI governance frameworks, incorporating responsible AI principles and AI risk management protocols, are increasingly becoming a prerequisite for scaling AI safely, ethically, and sustainably across enterprise environments.

The EU AI Act, U.S. executive guidance on AI risk management, and emerging ISO standards signal a shift in regulatory expectations: production AI must be controllable, explainable, and accountable. AI compliance is no longer optional — it is a production requirement.

Defining Production KPIs

Model accuracy should not be the sole metric to measure production AI. Mature enterprises track metrics like:

- Revenue lift

- Cost savings

- Risk reduction

- Cycle time reduction

- Customer retention impact

BCG research finds that companies that tie AI to explicit business KPIs are nearly 2× as likely to achieve significant AI business value.

Forward-looking enterprises are establishing:

- Cross-functional AI oversight boards

- Risk classification frameworks

- Model approval workflows

- Escalation mechanisms

This minimizes shadow AI and synchronizes technical execution with enterprise risk tolerance.

Bias, Explainability, Regulatory Alignment

Scrutiny intensifies as models influence lending, hiring, pricing, and healthcare decisions.

A 2023 IBM study revealed that over 75% of executives acknowledged the importance of AI governance in long-term competitiveness, yet fewer than 30% reported having robust frameworks in place.

Organizations must embed governance into production scaling to avoid reputational damage and regulatory penalties.

Cost Transparency

Due to token consumption, GPU time, and inference load, generative AI and LLM deployments introduce unpredictable cost curves.

Enterprises now require:

- Cost per inference tracking

- Model efficiency benchmarking

- ROI dashboards tied to infrastructure usage

To achieve financial sustainability during scaling, cost transparency is essential.
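As a rough illustration of cost-per-inference tracking and an ROI figure tied to usage, the sketch below assumes token-based pricing quoted per 1,000 tokens. All prices and values are made-up placeholders; actual pricing models vary by vendor and deployment type.

```python
def inference_cost(input_tokens: int, output_tokens: int,
                   input_price_per_1k: float, output_price_per_1k: float) -> float:
    """Cost of a single request under assumed per-1,000-token pricing."""
    return (input_tokens / 1000) * input_price_per_1k + (output_tokens / 1000) * output_price_per_1k


def cost_per_inference(requests: list[tuple[int, int]],
                       input_price_per_1k: float, output_price_per_1k: float) -> float:
    """Average cost across (input_tokens, output_tokens) pairs."""
    if not requests:
        raise ValueError("requests must not be empty")
    total = sum(inference_cost(i, o, input_price_per_1k, output_price_per_1k) for i, o in requests)
    return total / len(requests)


def roi(business_value: float, total_cost: float) -> float:
    """Simple ROI ratio: net value divided by cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (business_value - total_cost) / total_cost


usage = [(1000, 500), (3000, 1500)]  # hypothetical token counts per request
print(cost_per_inference(usage, input_price_per_1k=0.002, output_price_per_1k=0.004))
print(roi(business_value=150.0, total_cost=100.0))
```

Tracking these two numbers per use case, rather than per platform, makes it easier to see which deployments justify their infrastructure spend.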

Organizational Maturity: The Human Side of Scale

AI Centers of Excellence (CoE) vs. Federated Models

Two dominant models have emerged:

- Centralized AI CoE that controls standards and tooling

- Federated model enabling domain teams with centralized guardrails

According to McKinsey research, hybrid models – central governance with distributed execution – tend to outperform purely centralized structures.

Cross-Functional Collaboration

Production AI requires seamless collaboration between:

- Data scientists

- ML engineers

- DevOps

- Security teams

- Legal and compliance

- Business stakeholders

Siloed teams introduce deployment bottlenecks.

Leadership Accountability

Enterprises that succeed in AI scaling typically:

- Assign executive ownership

- Tie compensation to AI performance metrics

- Embed AI into corporate strategy documents

These structures formalize AI governance while enabling faster, smoother, and more confident enterprise AI adoption across multiple departments.

A Phased AI Implementation Roadmap to Production Scale: Building Your Enterprise AI Foundation

Sudden transformation may appear ambitious but often fails in execution. Scaling sustainably requires staged maturity. A well-structured AI implementation roadmap guides organizations from siloed experimentation toward scalable, production-grade AI systems.

Phase 1: Assess & Align – Building a robust AI implementation foundation

- Inventory existing AI pilots

- Identify high-value use cases

- Align stakeholders on measurable business outcomes

- Define AI governance structure

This phase prevents uncontrolled experimentation.

Phase 2: Build the Foundation – Frame your infrastructure and AI governance

- Implement MLOps pipelines

- Establish data quality standards

- Define model monitoring protocols

- Deploy version control systems

This stage converts isolated experiments into managed systems.

Phase 3: Launch & Learn – Validate your strategy for AI governance

- Deploy selected high-impact use cases

- Monitor ROI metrics

- Stress-test AI governance workflows

- Measure operational stability

Here, iteration helps build confidence.

Phase 4: Scale & Institutionalize – Achieving full AI production scaling

- Standardize tooling across teams

- Expand AI deployment portfolio

- Formalize AI budget allocation

- Integrate AI into strategic planning

At this stage, organizations achieve mature AI production scaling: they standardize, govern, and deeply integrate AI capabilities into enterprise operations to improve efficiency and achieve sustainable growth.

The Economics of AI at Scale: From CapEx Experiment to OpEx Discipline

Most AI pilots are treated as innovation experiments and lack structured financial governance. Loose budgets, unclear success goals, and undefined accountability are common. In contrast, production AI incurs ongoing infrastructure costs, inference workloads, retraining cycles, monitoring overhead, and vendor dependencies. Financial discipline is essential to sustain enterprise AI ROI, especially as organizations move from pilot investments to large-scale operational deployments.

Data as the True Moat: Quality, Lineage, and Ownership

In their eagerness to adopt advanced AI models, enterprises often ignore a crucial reality: unlike models, proprietary data is not replaceable. It is the foundation of sustained AI success and differentiation.

More than algorithmic sophistication, the competitive advantage of AI at scale is determined by:

- Unique data assets

- Clean data pipelines

- Accurate labeling

- Strong lineage tracking

- Responsible stewardship

Data Lineage and Observability

With rapidly scaling AI systems, enterprises must understand the origins of data – and how it has been processed, enriched, and modified across pipelines – to ensure regulatory compliance, audit readiness, and effective debugging.

Strong data foundations directly accelerate AI scaling in production by ensuring models stay accurate, reliable, and contextually relevant in dynamic and evolving environments.

From Automation to Augmentation: Redesigning Work Itself

In automation initiatives, workflow redesign is one of the key challenges that is often overlooked.

Enterprises frequently layer AI onto existing processes without reviewing how work itself should evolve.

In reality, AI scaling requires process transformation.

Human-in-the-Loop Systems

Production AI often delivers the best results in human-in-the-loop environments. Instead of replacing employees, enterprises need to redefine roles and responsibilities:

- AI generates recommendations

- Humans validate or override

- Feedback improves model accuracy

This creates a holistic learning cycle that allows enterprises to evolve with AI without workforce disruption.
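The recommend, validate, and feed-back loop described above can be sketched as follows. Routing by a confidence threshold is an assumption added for illustration (the simplest variant sends every recommendation to a human); the class, function names, and threshold value are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    item_id: str
    label: str
    confidence: float  # model confidence in [0, 1]


def route(rec: Recommendation, auto_threshold: float = 0.9) -> str:
    """Send low-confidence recommendations to a human reviewer (assumed policy)."""
    return "auto_apply" if rec.confidence >= auto_threshold else "human_review"


def record_feedback(rec: Recommendation, human_label: str, feedback_log: list[dict]) -> None:
    """Store the reviewer's decision so it can be used later as training signal."""
    feedback_log.append({
        "item_id": rec.item_id,
        "model_label": rec.label,
        "human_label": human_label,
        "overridden": human_label != rec.label,
    })


feedback: list[dict] = []
uncertain = Recommendation("inv-2", "approve", 0.55)
if route(uncertain) == "human_review":
    record_feedback(uncertain, "reject", feedback)  # the reviewer overrides the model
print(feedback)
```

The share of overridden recommendations in the feedback log is itself a useful operational metric: a rising override rate suggests the model needs retraining or the threshold needs revisiting.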

This shift is crucial for improving enterprise AI ROI, as human-AI collaboration often delivers more sustainable AI business value than full automation.

Effective change management is a key enabler of enterprise AI adoption, ensuring that technological investments translate into real organizational impact.

Measuring What Matters: Beyond Accuracy to Enterprise Impact

Conventional ML metrics like precision, recall, and AUC are insufficient for enterprise-scale measurement.

Mature organizations complement them with business-impact measures aligned with their broader AI implementation roadmap to ensure continuous optimization and accountability.

Conclusion

In 2026, the true achievers will be those that successfully cross into stages four and five – where AI shifts from an experimental initiative to a core capability seamlessly integrated into the operating model.

The lesson is clear. Scaling AI takes more than smart algorithms. It requires an entire environment comprising smarter systems, disciplined economics, mature AI governance, and aligned organizational design.

Ultimately, success in 2026 depends on integrating AI governance, executing a clear AI implementation roadmap, and sustaining measurable enterprise AI ROI while advancing toward full production scale.

That is how enterprises transition from pilot purgatory to production power – and from experimentation to competitive advantage.

FAQ

What is pilot purgatory…


How do you build an AI integration strategy…


What are the key phases…


How should enterprises measure AI ROI…


Why is AI governance a prerequisite
